We're Encore and we build tools to help developers create distributed systems and event-driven applications. In this post, you're going on an interactive journey to understand how publish/subscribe messaging works and why it matters for backend systems.

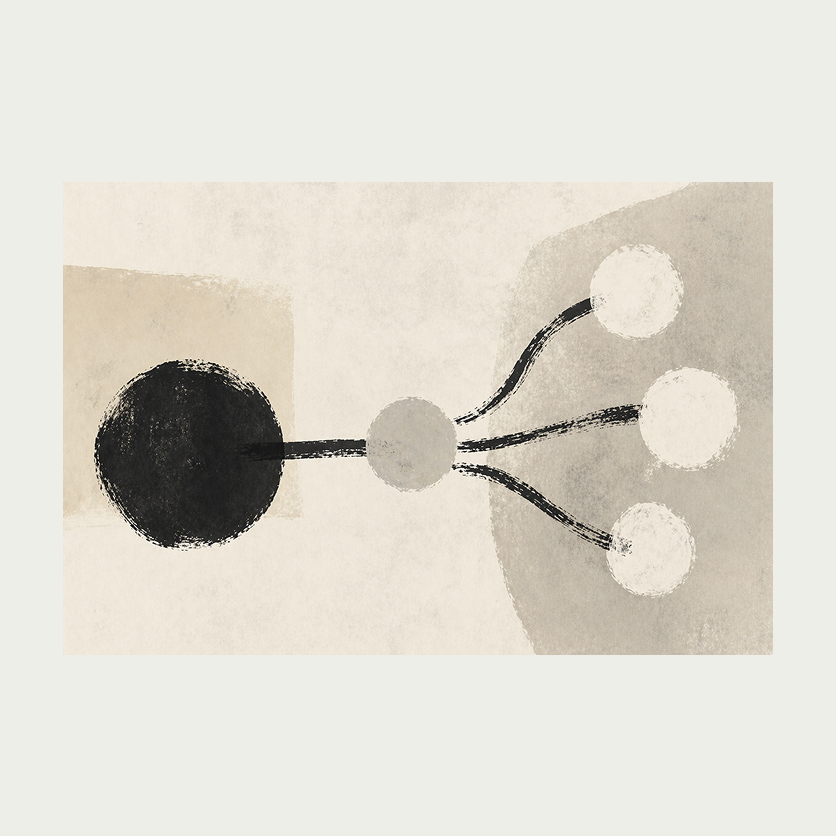

Most backend systems start with direct calls. Service A needs something from Service B, so it calls it directly. This works until it doesn't. What happens when Service B is slow? What if Service B goes down? What if you need Service C to also react when something happens in Service A?

Pub/Sub solves these problems by decoupling the sender from the receiver. Instead of calling services directly, you publish messages to a topic, and any number of subscribers process those messages independently. This is the pattern behind most event-driven architectures, and it's built into every major cloud provider: AWS SNS+SQS, Google Cloud Pub/Sub, and Azure Service Bus all implement it.

In this post, we'll build up from direct calls to a full Pub/Sub system. Each section has an interactive demo. Click the buttons to see how messages flow through each pattern.

The problem with direct calls

Let's start with the simplest possible setup: one service calling another directly. Click "Send" to send a request from the publisher to the consumer.

When the consumer is online, everything works. Requests arrive, get processed, and return a response. Try toggling the consumer offline and sending more requests. The messages fail because the publisher has no fallback. It's tightly coupled to the consumer being available.
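To make the coupling concrete, here is a minimal sketch of the direct-call setup. `DirectConsumer` and `sendDirect` are hypothetical names invented for this illustration, and the `online` flag stands in for the toggle in the demo.

```typescript
// Hypothetical consumer that can be toggled offline, like the one in the demo.
class DirectConsumer {
  online = true;

  handle(request: string): string {
    if (!this.online) {
      throw new Error("consumer unavailable");
    }
    return `processed ${request}`;
  }
}

// The publisher calls the consumer directly, so any consumer failure
// surfaces as a publisher failure.
function sendDirect(consumer: DirectConsumer, request: string): string {
  return consumer.handle(request);
}

const directConsumer = new DirectConsumer();
const firstResponse = sendDirect(directConsumer, "order-1"); // consumer is online

directConsumer.online = false;
let secondRequestFailed = false;
try {
  sendDirect(directConsumer, "order-2");
} catch {
  // Nothing buffers the request, so it is simply lost.
  secondRequestFailed = true;
}
```

Nothing sits between the two sides, so the only place to handle the failure is the publisher itself.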

This is fine for synchronous request/response patterns where the caller needs an immediate answer. But many backend operations don't need that. Order confirmations, analytics events, notification triggers, and audit logs all need to happen eventually, but the original request shouldn't fail just because one of the downstream systems is temporarily unavailable.

Topics and fan-out

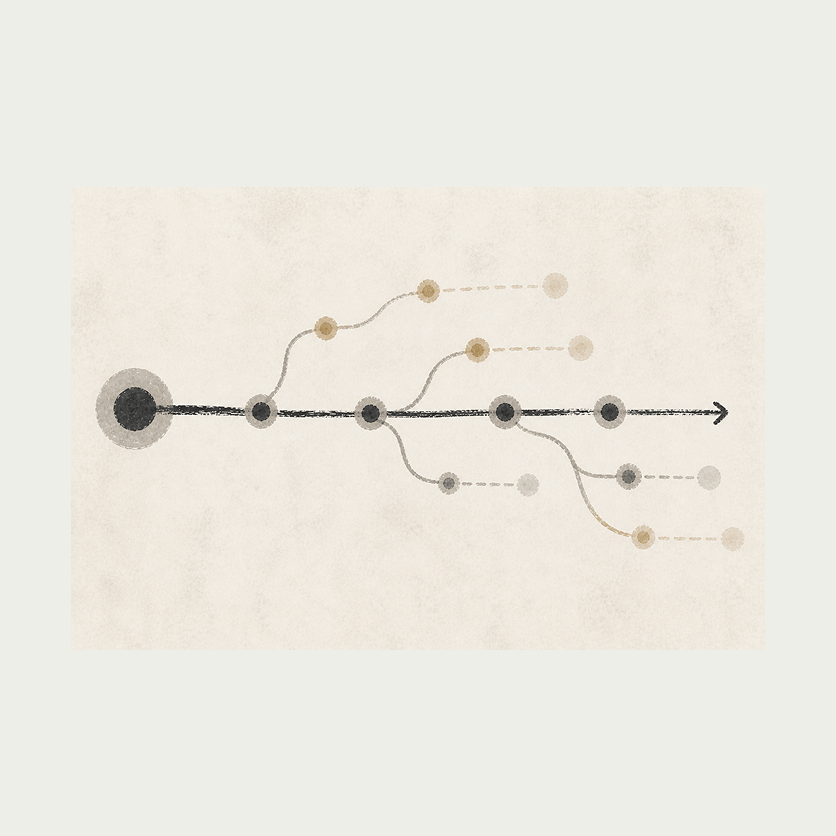

A topic sits between publishers and subscribers. The publisher sends a message to the topic without knowing or caring who will receive it. The topic then delivers a copy of that message to every subscriber. This is called fan-out.

Click "Publish" to send a message. Watch it arrive at the topic and then fan out to all three subscribers independently.

Each subscriber processes messages at its own pace. A slow subscriber doesn't block the others. If you publish several messages quickly, you'll see subscribers processing them in parallel at different speeds.

This changes how you design systems. Instead of Service A calling Service B, then Service C, then Service D in sequence (where each call adds latency and any failure cascades), Service A publishes once and all downstream services react independently. Adding a new subscriber doesn't require changing the publisher at all.

In practice, this is how you'd set up something like order processing: an order is placed, a message is published to an "order-created" topic, and separate subscribers handle payment processing, inventory updates, email confirmations, and analytics, each independently.
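As a rough sketch of fan-out, the in-memory topic below delivers a copy of each message to every subscriber. `InMemoryTopic`, `OrderCreated`, and the subscriber names are assumptions made for this example. A real broker delivers asynchronously and gives each subscription its own queue, while this sketch calls handlers synchronously to stay short.

```typescript
type OrderCreated = { orderId: string };
type OrderHandler = (msg: OrderCreated) => void;

// Minimal in-memory topic, for illustration only.
class InMemoryTopic {
  private subscribers: { name: string; handler: OrderHandler }[] = [];

  subscribe(name: string, handler: OrderHandler): void {
    this.subscribers.push({ name, handler });
  }

  // Deliver a copy of the message to every subscriber. A failing subscriber
  // is recorded, but it does not stop delivery to the others.
  publish(msg: OrderCreated): string[] {
    const failed: string[] = [];
    for (const sub of this.subscribers) {
      try {
        sub.handler({ ...msg });
      } catch {
        failed.push(sub.name);
      }
    }
    return failed;
  }
}

const orderCreatedTopic = new InMemoryTopic();
const handledBy: string[] = [];

orderCreatedTopic.subscribe("payment", (msg) => {
  handledBy.push(`payment:${msg.orderId}`);
});
orderCreatedTopic.subscribe("email", () => {
  throw new Error("mail server down");
});
orderCreatedTopic.subscribe("inventory", (msg) => {
  handledBy.push(`inventory:${msg.orderId}`);
});

// The publisher publishes once and never references a subscriber by name.
const failedSubscribers = orderCreatedTopic.publish({ orderId: "o-1" });
```

Here the failing email subscriber ends up in `failedSubscribers`, while payment and inventory still handle the order. Adding a fourth subscriber is one more `subscribe` call, with no change to the publishing code.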

Delivery guarantees

What happens when a subscriber crashes while processing a message? The answer depends on the delivery guarantee.

The demo below shows two systems side by side. Both receive the same messages, but they handle failure differently. Try publishing a few messages, then click "Crash" on a subscriber to see what happens.

At-most-once (left): the message is marked as delivered the moment it's sent to the subscriber. If the subscriber crashes before finishing, the message is gone. Simple, fast, but you can lose data.

At-least-once (right): the message stays in the topic until the subscriber acknowledges it. If the subscriber crashes, the message gets redelivered after a timeout. You never lose a message, but a subscriber might process the same message twice.
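The difference between the two guarantees comes down to when the message is removed. The sketch below is a simplified model, not how any particular broker is implemented. `SketchQueue` and `runWithOneCrash` are hypothetical names, a thrown error stands in for a crash, and the redelivery timeout is collapsed into simply calling `deliverNext` again.

```typescript
type DeliveryMode = "at-most-once" | "at-least-once";

class SketchQueue {
  private pending: string[] = [];
  private mode: DeliveryMode;

  constructor(mode: DeliveryMode) {
    this.mode = mode;
  }

  publish(messageId: string): void {
    this.pending.push(messageId);
  }

  // Hand the oldest pending message to the handler.
  // A handler that throws stands in for a subscriber crash.
  deliverNext(handler: (messageId: string) => void): void {
    const messageId = this.pending[0];
    if (messageId === undefined) return;

    if (this.mode === "at-most-once") {
      this.pending.shift(); // marked delivered before processing starts
      try {
        handler(messageId);
      } catch {
        // Crash: the message was already removed, so it is lost.
      }
      return;
    }

    try {
      handler(messageId);
      this.pending.shift(); // acknowledged: safe to remove
    } catch {
      // Crash before ack: the message stays pending and will be redelivered.
    }
  }
}

// Publish one message to a subscriber that crashes on its first attempt.
function runWithOneCrash(mode: DeliveryMode): string[] {
  const queue = new SketchQueue(mode);
  queue.publish("m1");

  const processed: string[] = [];
  let crashedOnce = false;
  const handler = (messageId: string): void => {
    if (!crashedOnce) {
      crashedOnce = true;
      throw new Error("subscriber crashed");
    }
    processed.push(messageId);
  };

  queue.deliverNext(handler); // first attempt crashes
  queue.deliverNext(handler); // redelivery, if the message still exists
  return processed;
}

const atMostOnceResult = runWithOneCrash("at-most-once"); // []
const atLeastOnceResult = runWithOneCrash("at-least-once"); // ["m1"]
```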

Most production systems use at-least-once delivery because losing messages is worse than processing one twice. The tradeoff is that your subscriber code needs to be idempotent — processing the same message twice should produce the same result as processing it once. In practice, this usually means checking whether you've already handled a message ID before doing the work.
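One common way to get idempotency is to record the IDs of messages you've already handled. The sketch below keeps that record in memory, which is an assumption made for brevity. `PaymentMessage`, `handlePayment`, and the field names are hypothetical. In a real service you would typically store the ID durably, ideally in the same transaction as the side effect.

```typescript
type PaymentMessage = { id: string; orderId: string; amountCents: number };

// In-memory stand-ins for a durable store.
const handledMessageIds = new Set<string>();
const chargedCentsByOrder = new Map<string, number>();

// Returns true if the work was done, false if the message was a duplicate.
function handlePayment(msg: PaymentMessage): boolean {
  if (handledMessageIds.has(msg.id)) {
    return false; // already handled: skip the side effect
  }
  const previous = chargedCentsByOrder.get(msg.orderId) ?? 0;
  chargedCentsByOrder.set(msg.orderId, previous + msg.amountCents);
  handledMessageIds.add(msg.id);
  return true;
}

const paymentMessage: PaymentMessage = { id: "msg-1", orderId: "o-1", amountCents: 1000 };
const firstDelivery = handlePayment(paymentMessage);
const redelivery = handlePayment(paymentMessage); // same message, delivered again
const totalCharged = chargedCentsByOrder.get("o-1");
```

After both deliveries the order has been charged once, so a redelivery is harmless.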

AWS SQS, Google Cloud Pub/Sub, and most message brokers default to at-least-once delivery. At-most-once is common for fire-and-forget use cases like analytics events where losing an occasional data point is acceptable.

Ordering

By default, most Pub/Sub systems don't guarantee order. Publish A, B, C to a topic and subscribers might see them as A, C, B or B, A, C. Brokers distribute messages across workers for throughput, and different messages can finish processing in any order.

When ordering matters, you have two options. Ordered queues (AWS SQS FIFO queues, Google Cloud Pub/Sub with ordering keys) guarantee first-in-first-out delivery, at the cost of lower throughput since messages sharing an ordering key can't be processed in parallel. The other option is to let messages arrive out of order and reconcile in application code, typically by tagging each message with a sequence number or timestamp.
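For the second option, reconciling in application code, here is a minimal sketch of a resequencer. It assumes the publisher tags each message with a gap-free sequence number starting at 1. `Resequencer` and `SequencedUpdate` are hypothetical names for this example.

```typescript
type SequencedUpdate = { seq: number; body: string };

class Resequencer {
  private nextSeq = 1;
  private buffered = new Map<number, SequencedUpdate>();

  // Accept a message in any order and return the messages that can now be
  // released in sequence order (possibly none).
  accept(msg: SequencedUpdate): SequencedUpdate[] {
    if (msg.seq < this.nextSeq) {
      return []; // already released: treat as a duplicate
    }
    this.buffered.set(msg.seq, msg);

    const ready: SequencedUpdate[] = [];
    let next = this.buffered.get(this.nextSeq);
    while (next !== undefined) {
      ready.push(next);
      this.buffered.delete(this.nextSeq);
      this.nextSeq += 1;
      next = this.buffered.get(this.nextSeq);
    }
    return ready;
  }
}

// Messages arrive as A, C, B but are released as A, B, C.
const resequencer = new Resequencer();
const arrivals: SequencedUpdate[] = [
  { seq: 1, body: "A" },
  { seq: 3, body: "C" },
  { seq: 2, body: "B" },
];

const releasedBodies: string[] = [];
for (const arrival of arrivals) {
  for (const ready of resequencer.accept(arrival)) {
    releasedBodies.push(ready.body);
  }
}
```

The tradeoff is that a missing sequence number holds back everything after it, so a real implementation would also need a policy for gaps that never fill.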

Usually you don't need global ordering. Scope ordering to the smallest unit that cares (per user, per account, per entity) rather than across the whole topic, so throughput stays high for everything else.
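Scoped ordering can be pictured as one lane per key. The sketch below groups messages by a hypothetical `orderingKey` field. Order is preserved inside each lane, and separate lanes could be handed to separate workers. `KeyedMessage` and `partitionByKey` are names made up for this illustration.

```typescript
type KeyedMessage = { orderingKey: string; body: string };

// Group messages into one lane per ordering key, preserving arrival order
// within each lane. Lanes are independent of each other.
function partitionByKey(messages: KeyedMessage[]): Map<string, string[]> {
  const lanes = new Map<string, string[]>();
  for (const msg of messages) {
    let lane = lanes.get(msg.orderingKey);
    if (lane === undefined) {
      lane = [];
      lanes.set(msg.orderingKey, lane);
    }
    lane.push(msg.body);
  }
  return lanes;
}

const userLanes = partitionByKey([
  { orderingKey: "user-1", body: "created" },
  { orderingKey: "user-2", body: "created" },
  { orderingKey: "user-1", body: "updated" },
  { orderingKey: "user-2", body: "deleted" },
]);
```

Each user's events stay in order, while nothing forces `user-1` to wait for `user-2`.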

Dead letter queues

Some messages can't be processed no matter how many times you retry them. Maybe the message references a record that was deleted, or the payload is malformed. Without a safety valve, these "poison messages" would be retried forever, blocking the queue.

A dead letter queue (DLQ) catches these messages. After a configurable number of failed processing attempts, the message is moved to the DLQ instead of being retried again. This keeps the main queue flowing while preserving the failed messages for investigation.

Click "Publish message" below to send a normal message. Click "Publish poison" to send one that will always fail processing. Poison messages are shown in orange. Watch what happens when the subscriber fails to process them.

The subscriber attempts to process each message. Normal messages succeed on the first try. Poison messages fail every time. After 3 failed attempts, the message is moved to the dead letter queue. Meanwhile, normal messages continue flowing through without being blocked.
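The behavior described above can be sketched as a retry loop with an attempt counter. This is a simplified in-memory model. `drainWithDeadLetterQueue`, `IncomingJob`, and `MAX_ATTEMPTS` are hypothetical names, and the limit of 3 mirrors the demo rather than any broker default.

```typescript
type IncomingJob = { id: string; payload: string };

const MAX_ATTEMPTS = 3; // matches the demo: three failures, then dead-letter

function drainWithDeadLetterQueue(
  jobs: IncomingJob[],
  handler: (job: IncomingJob) => void,
): { processed: string[]; deadLettered: string[] } {
  const mainQueue = jobs.map((job) => ({ job, attempts: 0 }));
  const processed: string[] = [];
  const deadLettered: string[] = [];

  let entry = mainQueue.shift();
  while (entry !== undefined) {
    try {
      handler(entry.job);
      processed.push(entry.job.id);
    } catch {
      entry.attempts += 1;
      if (entry.attempts >= MAX_ATTEMPTS) {
        deadLettered.push(entry.job.id); // give up and park it for inspection
      } else {
        mainQueue.push(entry); // retry later, behind the other messages
      }
    }
    entry = mainQueue.shift();
  }
  return { processed, deadLettered };
}

const dlqOutcome = drainWithDeadLetterQueue(
  [
    { id: "m1", payload: "ok" },
    { id: "m2", payload: "poison" },
    { id: "m3", payload: "ok" },
  ],
  (job) => {
    if (job.payload === "poison") throw new Error("cannot process payload");
  },
);
```

In this run `m1` and `m3` are processed and `m2` ends up dead-lettered after its third failure, without holding up the messages behind it.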

In production, you'd set up alerts on the DLQ and periodically inspect it to understand what's failing. Sometimes the fix is a code change, sometimes it's a data correction, and sometimes you just need to replay the messages after fixing the underlying issue.

How this maps to real infrastructure

The patterns we've explored here map directly to managed cloud services:

| Concept | AWS | GCP |
|---|---|---|
| Topic | SNS (Simple Notification Service) | Cloud Pub/Sub Topic |
| Subscription | SQS (Simple Queue Service) | Cloud Pub/Sub Subscription |
| Dead letter queue | SQS DLQ (redrive policy) | Cloud Pub/Sub DLQ (dead-letter topic) |
| At-least-once | Default for SQS | Default for Cloud Pub/Sub |
| Ordering | SQS FIFO queues | Cloud Pub/Sub ordering keys |
| Fan-out | SNS → multiple SQS queues | Topic → multiple Subscriptions |

Setting these up manually involves configuring topics, subscriptions, IAM policies, retry policies, DLQ redrive policies, and connecting everything together. With a framework like Encore, you declare the topic and subscription in your application code and the infrastructure is provisioned automatically with sensible defaults:

```typescript
import { Topic, Subscription } from "encore.dev/pubsub";

// Assumed event shape for this example; use your own message type.
interface OrderEvent {
  orderId: string;
}

// Provisions SNS+SQS on AWS or GCP Pub/Sub on GCP with sensible defaults (in-memory locally).
const orderEvents = new Topic<OrderEvent>("order-events", {
  deliveryGuarantee: "at-least-once",
});

// Each subscription gets its own queue and processes messages independently.
// sendConfirmationEmail is assumed to be defined elsewhere in the service.
const _ = new Subscription(orderEvents, "send-confirmation", {
  handler: async (event) => {
    await sendConfirmationEmail(event.orderId);
  },
});
```

The delivery guarantee, retry policy, and DLQ configuration are handled by the framework. You write the handler, and the infrastructure follows from the code.

Further reading

If this post made you curious about Pub/Sub and event-driven systems, here are some good next steps:

- Queueing: An interactive study of queueing strategies — the companion to this post, covering HTTP request queuing

- Retries: An interactive study of common retry methods — another interactive deep dive into retry patterns

- Event-Driven Architecture — a broader look at building event-driven systems

- Encore Pub/Sub documentation — how to use Pub/Sub in Encore
