What We Learned Building a Rust Runtime for TypeScript

67,000 lines of Rust, two years, and the non-obvious problems of making Node.js and Rust work together in the same process.

Encore started as a Go framework with a Go runtime, Go CLI, Go parser, and Go compiler. When we decided to support TypeScript, the straightforward choice would have been to write the runtime in TypeScript too, or extend the Go runtime with some kind of bridge, but we ended up writing a new runtime from scratch in Rust.

There were two reasons for this beyond what the Go sidecar prototype showed us (more on that below). First, we knew we wanted to extend Encore to more languages over time, and we'd seen projects like Prisma and Pydantic successfully use a Rust core with bindings into Node.js and Python respectively. Writing the core logic once in Rust and binding it to each language runtime meant we wouldn't be reimplementing infrastructure handling for every language we add. Second, Node.js runs application JavaScript on a single event-loop thread. By moving everything that isn't business logic into Rust (the HTTP request lifecycle, database connection management, pub/sub, tracing), all of it runs multi-threaded on tokio. That's a performance gain that isn't achievable within Node.js itself.

Two years and 67,000 lines later, the runtime handles the full HTTP request lifecycle (routing, request parsing and validation, response serialization), database connection pooling and querying, pub/sub across three cloud providers, distributed tracing, metrics collection, object storage, caching, and an API gateway powered by Pingora. The TypeScript code your application runs is the business logic, and everything underneath it is Rust. This post walks through the decisions that got us here, the problems that weren't obvious going in, and what we'd do differently.

Why not just extend the Go runtime

The Go runtime worked well for Go applications and still does. It compiles into the application binary and handles infrastructure concerns at the framework level. The obvious approach for TypeScript support would have been to run the Go runtime as a sidecar process alongside Node.js, with the two communicating over IPC.

We prototyped this and the latency overhead of serializing every database query, pub/sub message, and trace event across a process boundary added up fast. A single API request that touches a database and publishes an event would cross the IPC boundary six or seven times, and in benchmarks the sidecar approach added 2-4ms of overhead per request just from serialization and context switching, before any actual work happened.

The other issue was operational. Two processes means two things to monitor, two things that can crash independently, two sets of logs to correlate. For local development that's manageable, but in production across dozens of services the failure modes multiply.

So the runtime needed to live in the same process as the Node.js event loop, which meant either writing it in C/C++ with N-API bindings or in Rust with napi-rs. Rust gave us the memory safety the Go runtime had, adds compile-time protection against data races in safe code, and gives us access to the async ecosystem (tokio) for handling thousands of concurrent connections without blocking the Node.js event loop.

The first 10,000 lines

TypeScript support was developed incrementally over several months of internal work across many pull requests. The public release (#1073) shipped three Rust crates together: the core runtime, the JavaScript NAPI bindings, and a TypeScript parser. The framework only works when all of these pieces are in place, so the release had to be atomic even though the development wasn't.

The core runtime (runtimes/core) is structured as a set of managers, each responsible for one infrastructure concern:

    pub struct Runtime {
        api: api::Manager,         // HTTP lifecycle, routing, auth
        sqldb: sqldb::Manager,     // Database connections, pooling, querying
        pubsub: pubsub::Manager,   // Topic publishing and subscriptions
        objects: objects::Manager, // Object storage (S3, GCS)
        metrics: metrics::Manager, // Collection and export
        secrets: secrets::Manager, // Secret retrieval
        // ...
    }

Each manager initializes lazily from two separate protobuf configurations. The first is the application metadata, which describes the system itself. This is generated at compile time by the TypeScript parser, which reads your application code and extracts the infrastructure declarations:

    // Application metadata: a complete description of the system.
    // Generated at compile time by the TypeScript/Go parser.
    message Data {
      string module_path = 1;
      repeated Service svcs = 5;
      optional AuthHandler auth_handler = 6;
      repeated CronJob cron_jobs = 7;
      repeated PubSubTopic pubsub_topics = 9;
      repeated CacheCluster cache_clusters = 11;
      repeated SQLDatabase sql_databases = 14;
      repeated Gateway gateways = 15;
      repeated Bucket buckets = 17;
      // ...
    }

The second is the runtime configuration, which tells the runtime how to actually run: which cloud provider implementation to use for each pub/sub topic, how to authenticate, where each database cluster lives, service discovery for inter-service calls, and observability settings. This is generated at deploy time based on the target environment:

    // Runtime configuration: how the runtime is configured per environment.
    // Generated at deploy time.
    message RuntimeConfig {
      Environment environment = 1; // cloud provider, env type (dev/prod/test)
      Infrastructure infra = 2;    // SQL clusters, pub/sub, Redis, secrets, buckets
      Deployment deployment = 3;   // service discovery, auth methods, observability
    }

The separation matters because the same application metadata can be deployed to completely different environments (local development with NSQ and Docker Postgres, production with AWS SNS/SQS and RDS) just by swapping the runtime configuration. The application code doesn't change, and neither does the metadata that describes it.

Making Rust talk to JavaScript (and getting answers back)

Node-API (formerly N-API) is designed for calling into native code from JavaScript: you register a function, JavaScript calls it, you do work, you return a value. The harder direction is calling from native code into JavaScript, which is what we need when a pub/sub message arrives and needs to be dispatched to a TypeScript handler, or when an HTTP request needs to invoke a TypeScript endpoint function.

NAPI provides threadsafe functions for this, and napi-rs wraps them nicely. The problem is that the standard abstraction only supports sending arguments to JavaScript. It doesn't support capturing the return value. When a pub/sub message handler finishes processing, we need to know whether it succeeded or failed so we can ack or nack the message. When an API endpoint handler returns a response, we need that response back in Rust to serialize it and send it over the wire.

We forked napi-rs's ThreadSafeFunction to allow calling the JavaScript function manually and capturing its return value:

    // Fork of threadsafe_function from napi-rs that allows calling
    // JS function manually rather than only returning args.
    // This enables us to use the return value of the function.
    pub struct ThreadSafeCallContext<T: 'static> {
        pub env: Env,
        pub value: T,
        pub callback: Option<JsFunction>,
    }

The other subtlety is promises. TypeScript endpoint handlers are async functions that return promises. When we call into JavaScript and get back a value, we need to detect whether it's a promise and, if so, chain a .then() callback that resolves back into Rust via a tokio channel. This bridges JavaScript's async model to Rust's:

    // Detect if the JS return value is a Promise, and if so,
    // await it by chaining .then() that resolves into a Rust channel
    fn await_promise(env: &Env, value: JsUnknown) -> Result<()> {
        if value.is_promise()? {
            // Chain .then() with a callback that sends the result
            // through a tokio oneshot channel back to the Rust side
        }
        Ok(())
    }
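To make the shape of that bridge concrete without pulling in napi-rs, here is a minimal standard-library sketch. It is an illustration under assumptions, not the runtime's code: a spawned thread stands in for the Node.js event loop settling the promise, `std::sync::mpsc` stands in for the tokio oneshot channel, and `HandlerResult` and `call_handler` are hypothetical names.

```rust
use std::sync::mpsc;
use std::thread;

/// Outcome of a handler as the Rust side wants to see it (hypothetical type).
#[derive(Debug, PartialEq)]
pub enum HandlerResult {
    Ok(String),
    Err(String),
}

/// Stand-in for "call the JS function and chain .then()/.catch()".
/// The closure plays the async handler; the spawned thread plays the
/// event loop that eventually settles the promise.
pub fn call_handler<F>(handler: F) -> mpsc::Receiver<HandlerResult>
where
    F: FnOnce() -> Result<String, String> + Send + 'static,
{
    let (tx, rx) = mpsc::channel();
    thread::spawn(move || {
        let settled = match handler() {
            Ok(value) => HandlerResult::Ok(value), // the fulfilled branch
            Err(err) => HandlerResult::Err(err),   // the rejected branch
        };
        // The receiver may already be gone if the caller stopped waiting,
        // so a failed send is ignored rather than treated as an error.
        let _ = tx.send(settled);
    });
    rx
}
```

The caller holds only the receiving end, so it can wait on the result, or stop waiting, without the handler side needing to know.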

The relationship between Node.js and Rust in this setup is symbiotic rather than a traditional host/guest arrangement. Node.js (or Bun, which we also support) starts the process and imports the Encore library as a native module. The library then takes over infrastructure concerns: routing, database connections, tracing, pub/sub. Node.js owns the process lifecycle while Rust owns the infrastructure layer.

This creates an interesting edge case that Fredrik recently addressed: Rust futures can be dropped at any time (for example, when Cloud Run's request timeout closes the connection), but the JavaScript handler is still running on the Node.js event loop and can't be cancelled. The Rust side would never reach request_span_end, leaving the trace without a root span. The fix is a CancellationGuard that detects when its future is dropped and spawns a detached tokio task to await the JavaScript handler's completion:

    /// Guard that spawns the handler into a background task on cancellation,
    /// ensuring `request_span_end` is always emitted. On the normal path
    /// (handler completes before cancellation), this is a no-op.
    struct CancellationGuard<'a> {
        call: &'a mut HandlerCall,
        info: Option<CancellationGuardInfo>,
    }

On the normal path where the handler completes before cancellation, the guard does nothing. When the future is dropped mid-flight, the guard's Drop implementation takes ownership of the in-flight HandlerCall and spawns it as a background task so the JavaScript side can finish cleanly and the trace span gets closed.
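The drop-time handoff can be sketched with the standard library alone. This is a simplified illustration, not the actual guard: it owns the call through an `Option` instead of borrowing `&'a mut HandlerCall`, a thread stands in for the detached tokio task, and the `done` and `span_end` channels are hypothetical stand-ins for the JavaScript handler's completion and the `request_span_end` event.

```rust
use std::sync::mpsc::{Receiver, Sender};
use std::thread;

/// Hypothetical stand-in for an in-flight JavaScript handler call:
/// the receiver yields once the handler has finished.
pub struct HandlerCall {
    pub done: Receiver<&'static str>,
}

/// If dropped while still holding the call, hands it to a background
/// thread that waits for completion and then emits the span-end event.
pub struct CancellationGuard {
    call: Option<HandlerCall>,
    span_end: Sender<String>,
}

impl CancellationGuard {
    pub fn new(call: HandlerCall, span_end: Sender<String>) -> Self {
        Self { call: Some(call), span_end }
    }

    /// Normal path: wait for the handler inline and emit the span end.
    pub fn complete(mut self) {
        if let Some(call) = self.call.take() {
            let outcome = call.done.recv().unwrap_or("handler vanished");
            let _ = self.span_end.send(format!("inline: {}", outcome));
        }
    }
}

impl Drop for CancellationGuard {
    fn drop(&mut self) {
        // After `complete()` the call is already taken, so this is a no-op.
        if let Some(call) = self.call.take() {
            let span_end = self.span_end.clone();
            thread::spawn(move || {
                let outcome = call.done.recv().unwrap_or("handler vanished");
                let _ = span_end.send(format!("background: {}", outcome));
            });
        }
    }
}
```

Dropping the guard before the handler finishes still produces exactly one span-end event, just from the background thread instead of the request path.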

Embedding an API gateway with Pingora

Encore applications are composed of multiple services that communicate over the network. In production, a gateway routes external requests to the right service, handles authentication, CORS, and request validation. The typical approach would be to run this as a separate process, like nginx or envoy sitting in front of your services. We embedded it directly into the runtime instead.

We use Pingora, Cloudflare's open-source HTTP proxy library, as the gateway layer. Pingora is designed to be used as a library rather than a standalone binary, which is exactly what we needed. The gateway implements Pingora's ProxyHttp trait and plugs into the same process as the rest of the runtime:

    pub struct Gateway {
        inner: Arc<Inner>,
    }

    struct Inner {
        service_registry: Arc<ServiceRegistry>,
        router: router::Router,
        cors_config: CorsHeadersConfig,
        // Pub/sub push subscriptions proxied through the gateway
        proxied_push_subs: HashMap<String, ProxiedPushSub>,
        // ...
    }

The gateway shares memory with the auth system, the service registry, and the trace collector. There's no serialization boundary between "the proxy decided this request is authenticated" and "the endpoint handler runs." The auth result is an Arc'd struct that gets passed directly to the handler.

A critical advantage of the in-process approach: user-defined auth handlers written in TypeScript can execute directly inside the gateway. Pingora calls into Node.js to run your auth handler, gets the result back, and the request continues through the gateway with the auth context attached. With a separate proxy process, the same flow would require serialization and IPC on every authenticated request.

Pingora also gives us connection pooling to upstream services, HTTP/2 support, and graceful connection draining during deploys, all things we'd otherwise have to build ourselves or bolt on from the outside.

Fun fact

Pingora didn't support Windows when we started using it, and since Encore needs to run on Windows for local development, we added Windows support to Pingora and contributed it upstream. So today Pingora runs on Windows thanks to Encore. 🙌

Abstracting three cloud providers without generics hell

The pub/sub system needs to work identically across NSQ (local development), GCP Pub/Sub, and AWS SNS+SQS. The straightforward Rust approach would be heavy use of generics, but that would leak the provider choice into every type signature in the codebase. Instead we use trait objects:

    trait Cluster: Debug + Send + Sync {
        fn topic(&self, cfg: &PubSubTopic, publisher_id: xid::Id)
            -> Arc<dyn Topic + 'static>;

        fn subscription(&self, cfg: &PubSubSubscription, meta: &Subscription)
            -> Arc<dyn Subscription + 'static>;
    }

    trait Topic: Debug + Send + Sync {
        fn publish(&self, msg: MessageData, ordering_key: Option<String>)
            -> Pin<Box<dyn Future<Output = Result<MessageId>> + Send + '_>>;
    }

    trait Subscription: Debug + Send + Sync {
        fn subscribe(&self, handler: Arc<SubHandler>)
            -> Pin<Box<dyn Future<Output = APIResult<()>> + Send + 'static>>;
    }

Three traits, three implementations each. The manager picks the right cluster implementation at startup based on the runtime configuration and wraps everything in Arc<dyn Trait>, and the rest of the codebase never knows or cares which cloud provider is underneath.

Each provider has its own quirks. NSQ uses an actor-like pattern with a tokio-spawned producer loop and message channels. GCP uses tokio::sync::OnceCell for lazy client initialization, because the Cluster::topic() trait method needs to return synchronously (callers shouldn't have to await to get a topic reference), but creating a GCP client is an async operation that involves network calls for authentication. The OnceCell hides that async initialization behind a synchronous interface, deferring it to first use. AWS SQS/SNS needs publisher IDs for FIFO message ordering that the other providers don't care about.

All of these differences live inside their respective modules. The manager sees Arc<dyn Topic> and calls .publish(). The fact that under the hood one implementation is talking to a local NSQ daemon over TCP and another is making authenticated HTTPS calls to AWS is invisible. Object storage follows the same pattern, with implementations for S3-compatible storage and Google Cloud Storage.
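A stripped-down version of the pattern, using only the standard library, might look like the following. Everything here is illustrative: the synchronous `publish` signature, the `LocalTopic` and `CloudTopic` types, the `Provider` enum, and the `topic_for` function are hypothetical simplifications, not the runtime's API.

```rust
use std::fmt::Debug;
use std::sync::{Arc, Mutex};

pub trait Topic: Debug + Send + Sync {
    /// Publishes a message and returns a provider-specific message ID.
    fn publish(&self, msg: &str) -> Result<String, String>;
}

/// Hypothetical in-memory provider, standing in for a local broker.
#[derive(Debug, Default)]
pub struct LocalTopic {
    sent: Mutex<Vec<String>>,
}

impl Topic for LocalTopic {
    fn publish(&self, msg: &str) -> Result<String, String> {
        let mut sent = self.sent.lock().map_err(|e| e.to_string())?;
        sent.push(msg.to_string());
        Ok(format!("local-{}", sent.len()))
    }
}

/// Hypothetical cloud provider with its own quirk: it rejects empty messages.
#[derive(Debug)]
pub struct CloudTopic {
    pub region: String,
}

impl Topic for CloudTopic {
    fn publish(&self, msg: &str) -> Result<String, String> {
        if msg.is_empty() {
            return Err("empty message".to_string());
        }
        Ok(format!("{}-{}", self.region, msg.len()))
    }
}

/// Stand-in for the per-environment runtime configuration.
#[derive(Debug, Clone, Copy, PartialEq)]
pub enum Provider {
    Local,
    Cloud,
}

/// The only place that knows which concrete type is in use.
pub fn topic_for(provider: Provider) -> Arc<dyn Topic> {
    match provider {
        Provider::Local => Arc::new(LocalTopic::default()),
        Provider::Cloud => Arc::new(CloudTopic { region: "eu-1".to_string() }),
    }
}
```

Callers hold an `Arc<dyn Topic>` and never name a provider type, so swapping the configuration changes behavior without touching any call site. The cost is dynamic dispatch on each call, which is negligible next to a network round trip.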

A custom binary trace protocol

Every operation in an Encore application is traced automatically: API calls, database queries, pub/sub publishes, HTTP calls to external services, cache operations. The traces include timing, nesting (which database query happened inside which API call), request/response bodies, and error details. That's a lot of data generated on every request, so we implemented a custom binary trace protocol rather than constructing protobuf messages and encoding them. The EventBuffer is a purpose-built serializer that writes trace events into a contiguous byte buffer with variable-length integer encoding:

    pub struct EventBuffer {
        scratch: [u8; 10],
        buf: BytesMut,
    }

The scratch buffer avoids allocations for varint encoding; 10 bytes is enough to hold the longest 7-bits-per-byte varint of a 64-bit value. Trace IDs and span IDs are written as raw bytes on the wire (16 bytes and 8 bytes respectively) rather than encoded strings. OpenTelemetry's binary protocol carries its IDs the same way. The savings compound across millions of traces when every request generates dozens of span events.
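As a rough sketch of how a fixed scratch array supports varint encoding, the following assumes unsigned LEB128 (the 7-bits-per-byte scheme protobuf uses), which the 10-byte scratch size is consistent with but which is an assumption here. It swaps `BytesMut` for a `Vec<u8>` to stay within the standard library, and the method names `uvarint`, `bytes`, and `as_slice` are hypothetical.

```rust
/// Hypothetical miniature of the buffer described above.
pub struct EventBuffer {
    scratch: [u8; 10], // 10 bytes is the longest LEB128 varint for a u64
    buf: Vec<u8>,      // the real type uses BytesMut; Vec keeps this std-only
}

impl EventBuffer {
    pub fn new() -> Self {
        Self { scratch: [0; 10], buf: Vec::new() }
    }

    /// Appends `value` as an unsigned LEB128 varint: 7 bits per byte,
    /// with the high bit set on every byte except the last.
    pub fn uvarint(&mut self, mut value: u64) {
        let mut n = 0;
        while value >= 0x80 {
            self.scratch[n] = (value as u8 & 0x7f) | 0x80;
            value >>= 7;
            n += 1;
        }
        self.scratch[n] = value as u8;
        // One copy into the output buffer; no temporary heap allocation.
        self.buf.extend_from_slice(&self.scratch[..=n]);
    }

    /// Appends fixed-width IDs as raw bytes, with no length prefix.
    pub fn bytes(&mut self, raw: &[u8]) {
        self.buf.extend_from_slice(raw);
    }

    pub fn as_slice(&self) -> &[u8] {
        &self.buf
    }
}
```

Small values dominate trace data (event types, short lengths, small deltas), so most integers cost a single byte under this scheme.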

We also needed to correlate monotonic time (for accurate duration measurement) with wall-clock time (for display). The TimeAnchor captures both tokio::time::Instant and chrono::DateTime at the same moment, then every subsequent event records only the monotonic offset. This avoids clock skew issues that plague distributed tracing systems where events appear to happen before their parents.
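The anchoring idea can be shown with standard-library clocks. This sketch substitutes `std::time::Instant` and `std::time::SystemTime` for the tokio and chrono types named above, and the method names `offset_nanos` and `wall_time` are hypothetical.

```rust
use std::time::{Duration, Instant, SystemTime};

/// Captures a monotonic and a wall-clock reading at the same moment.
pub struct TimeAnchor {
    mono: Instant,
    wall: SystemTime,
}

impl TimeAnchor {
    pub fn new() -> Self {
        Self { mono: Instant::now(), wall: SystemTime::now() }
    }

    /// What an event stores: nanoseconds elapsed since the anchor,
    /// measured on the monotonic clock only.
    pub fn offset_nanos(&self, at: Instant) -> u64 {
        at.saturating_duration_since(self.mono).as_nanos() as u64
    }

    /// What a trace viewer reconstructs for display: the anchor's
    /// wall-clock time plus the monotonic offset.
    pub fn wall_time(&self, offset_nanos: u64) -> SystemTime {
        self.wall + Duration::from_nanos(offset_nanos)
    }
}
```

Because every displayed timestamp is derived from one wall-clock reading plus a monotonic offset, a system clock adjustment mid-request cannot reorder events within the trace.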

Trace sampling is configurable per-endpoint, per-service, and globally. The sampling decision happens at the start of the request and propagates to all child spans, so you never get a partial trace. A trace where you can see the API call but not the database query it made is worse than no trace at all, so this was a hard requirement.
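One way to model "decide once, propagate everywhere" is sketched below. The details are assumptions for illustration: the precedence order (endpoint over service over global), the ID-based decision rule, and the names `SamplingConfig`, `TraceContext`, `start_trace`, and `child` are all hypothetical rather than taken from the runtime.

```rust
/// Sampling decision made once at the root and carried by every span.
#[derive(Debug, Clone, Copy, PartialEq)]
pub struct TraceContext {
    pub trace_id: u64,
    pub sampled: bool,
}

/// Hypothetical config: rates in 0.0..=1.0, most specific level wins.
pub struct SamplingConfig {
    pub global: f64,
    pub per_service: Option<f64>,
    pub per_endpoint: Option<f64>,
}

impl SamplingConfig {
    /// Endpoint rate overrides service rate, which overrides the global rate.
    pub fn rate(&self) -> f64 {
        self.per_endpoint.or(self.per_service).unwrap_or(self.global)
    }

    /// Decides once, at the start of the request. Deriving the decision
    /// from the trace ID keeps it deterministic for a given trace.
    pub fn start_trace(&self, trace_id: u64) -> TraceContext {
        let rate = self.rate();
        let sampled = rate >= 1.0
            || (rate > 0.0 && (trace_id as f64) < rate * (u64::MAX as f64));
        TraceContext { trace_id, sampled }
    }
}

impl TraceContext {
    /// Child spans copy the parent's decision instead of re-deciding.
    pub fn child(&self) -> TraceContext {
        *self
    }
}
```

Since `child` never consults the config, a database span cannot end up unsampled under a sampled API call, which is the partial-trace case the requirement rules out.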

Parsing TypeScript from Rust

The TypeScript parser (tsparser) is a separate Rust crate that reads your application's TypeScript source code and extracts the Encore framework declarations: which services exist, which endpoints they expose, which databases and pub/sub topics they declare, what the request and response types look like.

We built this on top of SWC's TypeScript parser for the AST, then wrote our own analysis passes on top. The parser needs to resolve imports, follow re-exports, and understand TypeScript's type system well enough to extract the shapes of request and response types, including generics, unions, and mapped types. We don't yet support the full complexity of TypeScript's type system, but we're continuously expanding coverage (recent additions include key remapping, method signatures, call signatures, and intersection types).

The parser is what makes infrastructure from code work. When you write new SQLDatabase("orders", { migrations: "./migrations" }), the parser sees that declaration, extracts the database name and migration path, and includes it in the application metadata protobuf that the runtime reads at startup. The runtime never parses TypeScript itself; it just receives a structured description of the application and configures itself accordingly.

The parser is also what enables the MCP server, the architecture diagrams, and the API documentation generation, since all of those consume the same metadata that the parser produces.

What we'd do differently

Invest in error context earlier. Rust's error handling is excellent at the language level, but early on we relied too heavily on anyhow::Context generically instead of defining specific error types. When something fails three layers deep in the pub/sub stack, "failed to publish message" is less useful than a structured error that includes the topic name, the message size, the provider, and the specific failure mode. We've been retrofitting better error types incrementally.
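For illustration, a structured publish error carrying those fields could look like this. It is a sketch of the general technique, not the error types in the codebase; `PublishError`, `PublishErrorKind`, and their fields are hypothetical.

```rust
use std::error::Error;
use std::fmt;

/// Hypothetical structured error for a failed publish.
#[derive(Debug)]
pub struct PublishError {
    pub topic: String,
    pub provider: &'static str,
    pub message_bytes: usize,
    pub kind: PublishErrorKind,
}

/// The specific failure mode, so callers can match on it.
#[derive(Debug, PartialEq)]
pub enum PublishErrorKind {
    MessageTooLarge { limit_bytes: usize },
    Unauthorized,
    Timeout,
}

impl fmt::Display for PublishError {
    fn fmt(&self, f: &mut fmt::Formatter<'_>) -> fmt::Result {
        write!(
            f,
            "publish to topic {:?} via {} failed ({} bytes): ",
            self.topic, self.provider, self.message_bytes
        )?;
        match &self.kind {
            PublishErrorKind::MessageTooLarge { limit_bytes } => {
                write!(f, "message exceeds {} byte limit", limit_bytes)
            }
            PublishErrorKind::Unauthorized => write!(f, "credentials rejected"),
            PublishErrorKind::Timeout => write!(f, "timed out"),
        }
    }
}

impl Error for PublishError {}
```

Compared with a string attached via a generic context call, the fields stay machine-readable: a retry policy can match on `Timeout`, and a log line can include the topic and provider without parsing a message.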

Ship the OpenTelemetry adapter sooner. Our custom trace format captures more information than is straightforward to represent in OpenTelemetry (request/response payloads, automatic redaction), and that's been valuable. But customers wanted to export traces to their existing observability stacks from day one, and the "you're not using an open standard" pushback is fair. We're building an OTel adapter now, but should have prioritized it earlier.

Double down on snapshot testing. We use snapshot testing, especially around the TypeScript type system parsing, and it's been one of the most effective testing strategies in the codebase. Every time we add support for a new TypeScript construct, snapshot tests catch regressions across the entire surface area. We should have invested more in this from the start.

Investigate using the Rust runtime for Go. Having a single runtime for both languages would reduce the maintenance burden significantly. But FFI between Go and Rust is harder than the Node.js case because of how Go's runtime manages memory. Memory ownership at the Go-Rust boundary is tricky because cgo restricts how foreign code may hold Go pointers (the runtime is free to relocate goroutine stacks, for example), and cgo has its own performance overhead. We've started exploring this but it's not straightforward.

The numbers

The Rust runtime processes billions of requests daily across Encore Cloud deployments, with many more from self-hosted companies whose traffic we don't see.

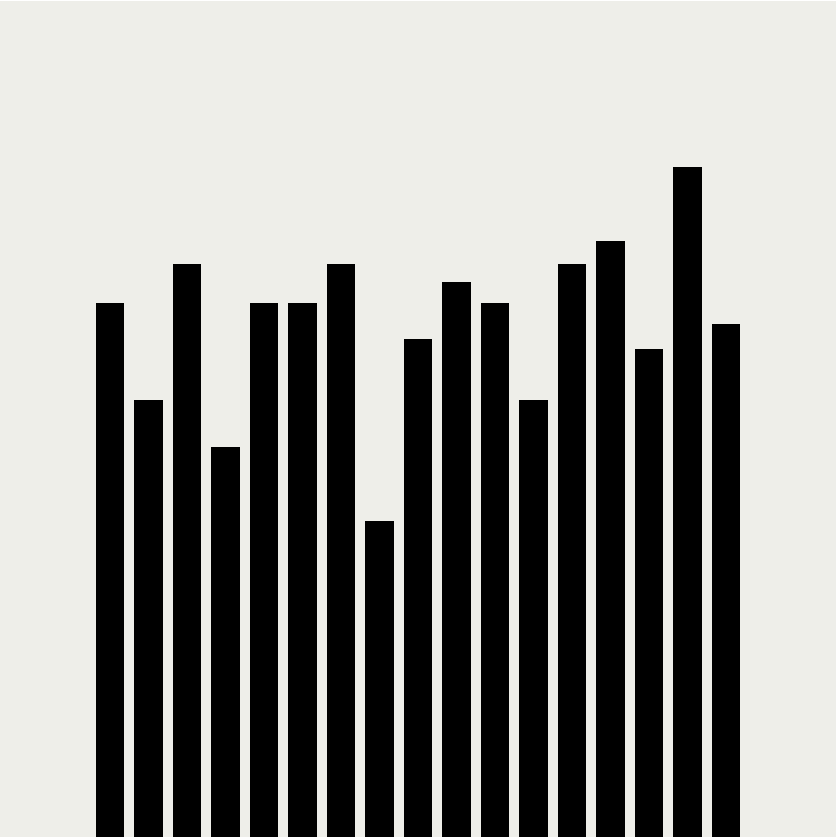

The performance impact of moving the infrastructure layer to Rust is measurable. In benchmarks using 150 concurrent workers over 10 seconds (best of five runs, load generated with oha):

| Framework | Requests/sec | Requests/sec (with validation) | P99 latency | P99 latency (with validation) |
|---|---|---|---|---|
| Encore.ts | 121,005 | 107,018 | 2.3ms | 3.6ms |
| Bun + Zod | 101,611 | 33,772 | 3.7ms | 14.9ms |
| Elysia + TypeBox | 82,617 | 35,124 | — | — |
| Hono + TypeBox | 71,202 | 33,150 | — | — |
| Fastify + Ajv | 62,207 | 48,397 | 4.1ms | 5.4ms |
| Express + Zod | 15,707 | 11,878 | 11.9ms | 18.2ms |

With validation enabled, Encore.ts handles about 9x the throughput of Express.js (about 7.7x without validation), with roughly 80% lower P99 latency in both cases. The gap widens with validation enabled because Encore validates requests at the Rust layer using the type information the parser already extracted, while the other frameworks run validation in JavaScript. For a deeper breakdown of where the performance comes from, see Encore.ts — 9x faster than Express.js.

The codebase is 67,077 lines of Rust across the core runtime, JavaScript bindings, TypeScript parser, and process supervisor. The Go runtime it sits alongside is 42,629 lines and still actively maintained for Go applications. They share the same protobuf-based configuration format but are otherwise independent codebases.

The runtime handles everything below the application layer: accepting connections, routing requests, parsing and validating inputs, managing database pools, publishing messages, collecting traces, exporting metrics. The TypeScript code in your application is pure business logic that doesn't import express, configure database connections, or set up pub/sub consumers. It declares what infrastructure it needs, and 67,000 lines of Rust make it happen.

Encore is open source. The runtime code lives in the main repo under runtimes/core (shared Rust runtime), runtimes/js (Node.js bindings), and tsparser (TypeScript analysis). If you want to see how any of this works, the code is there.
