Encore.ts — 9x faster than Express.js, 3x faster than ElysiaJS & Hono

Combining Node.js with Async Rust for remarkable performance

We recently announced that Encore.ts, a backend framework for TypeScript, is generally available and ready to use in production. So we figured now is a great time to dig into some of the design decisions we made along the way, and how they led to the remarkable performance numbers we've seen.

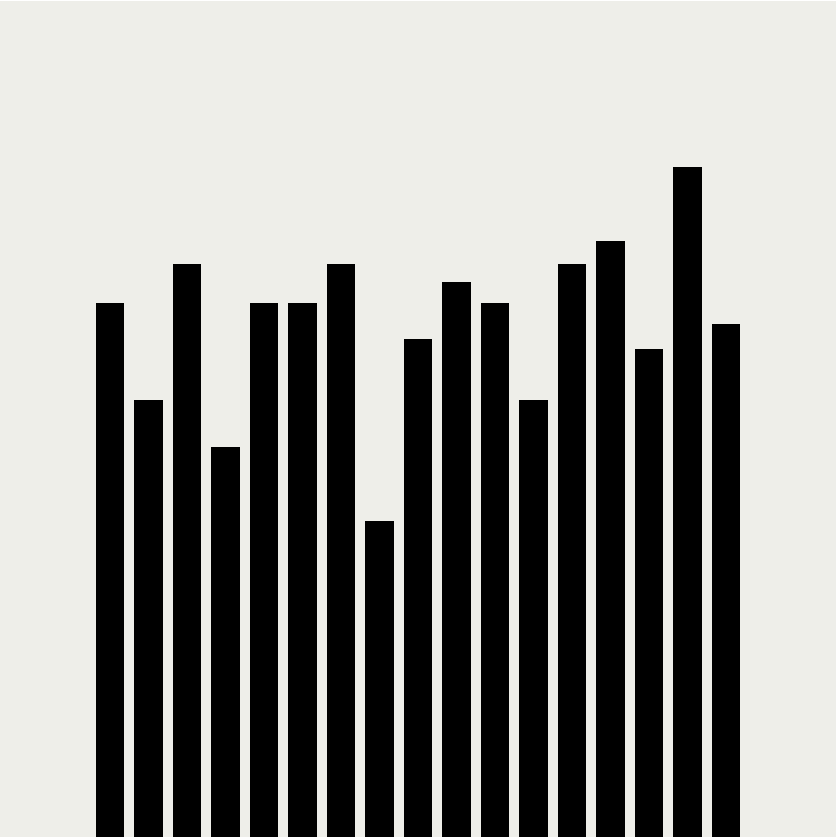

Performance benchmarks

We benchmarked Encore.ts, ElysiaJS, Hono, Bun, Fastify, and Express.js, both with and without schema validation.

For schema validation we used Zod where possible. For ElysiaJS and Hono we used TypeBox, since it is their built-in solution. For Fastify we used Ajv, its officially supported schema validation library.

For each benchmark we took the best result of five runs. Each run made as many requests as possible with 150 concurrent workers over 10 seconds. Load was generated with oha, an HTTP load-testing tool built with Rust and Tokio.
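As a rough illustration of the "best of five runs" methodology, the TypeScript sketch below computes requests per second for each run and keeps the fastest one. The `RunResult` shape and the numbers are hypothetical (they are neither oha's output format nor our measurements), and the sketch assumes "best" means highest throughput.

```typescript
// Hypothetical shape of one load-test run (not oha's actual output format).
interface RunResult {
  totalRequests: number; // requests completed during the run
  durationSec: number; // wall-clock length of the run, e.g. 10
}

// Throughput of a single run.
function requestsPerSecond(run: RunResult): number {
  return run.totalRequests / run.durationSec;
}

// "Best of N": keep the run with the highest throughput.
function bestRun(runs: RunResult[]): RunResult {
  if (runs.length === 0) throw new Error("no runs to compare");
  return runs.reduce((best, run) =>
    requestsPerSecond(run) > requestsPerSecond(best) ? run : best
  );
}

// Five made-up 10-second runs, for illustration only.
const exampleRuns: RunResult[] = [
  { totalRequests: 1000000, durationSec: 10 },
  { totalRequests: 1200000, durationSec: 10 },
  { totalRequests: 1100000, durationSec: 10 },
  { totalRequests: 1150000, durationSec: 10 },
  { totalRequests: 1050000, durationSec: 10 },
];

console.log(requestsPerSecond(bestRun(exampleRuns))); // 120000
```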

Enough talk, let's see the numbers!

Encore.ts handles 9x more requests/sec than Express.js

[Chart: requests per second; higher is better]

(Check out the benchmark code on GitHub.)

Encore.ts has 80% lower response latency than Express.js

[Chart: P99 response latency; lower is better]

(Check out the benchmark code on GitHub.)
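For readers less familiar with P99: it is the latency that 99% of requests come in at or under. The sketch below uses the nearest-rank definition (an assumption; load-testing tools may interpolate differently) and shows how an "X% lower latency" figure follows from two P99 values. The helper names and sample data are hypothetical.

```typescript
// Nearest-rank percentile: the smallest sample such that at least p% of all
// samples are less than or equal to it. Other tools may interpolate instead.
function percentile(samplesMs: number[], p: number): number {
  if (samplesMs.length === 0) throw new Error("no samples");
  const sorted = samplesMs.slice().sort((a, b) => a - b);
  const rank = Math.max(1, Math.ceil((p * sorted.length) / 100)); // 1-based
  return sorted[rank - 1];
}

// Relative reduction of `candidate` compared with `baseline`, in percent.
function percentReduction(baseline: number, candidate: number): number {
  return ((baseline - candidate) * 100) / baseline;
}

// Made-up latencies: 1 ms, 2 ms, ..., 100 ms.
const latenciesMs: number[] = [];
for (let i = 1; i <= 100; i++) latenciesMs.push(i);

console.log(percentile(latenciesMs, 99)); // 99
console.log(percentReduction(10, 2)); // 80
```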

Encore.ts achieves this performance while maintaining 100% compatibility with Node.js.

How is this possible? From our testing we've identified three major sources of this performance, all related to how Encore.ts works under the hood.

Boost #1: Putting an event loop in your event loop

Node.js runs JavaScript code using a single-threaded event loop. Despite being single-threaded, this model scales quite well in practice, because it uses non-blocking I/O and the underlying V8 JavaScript engine (which also powers Chrome) is extremely optimized.

But you know what's faster than a single-threaded event loop? A multi-threaded one.

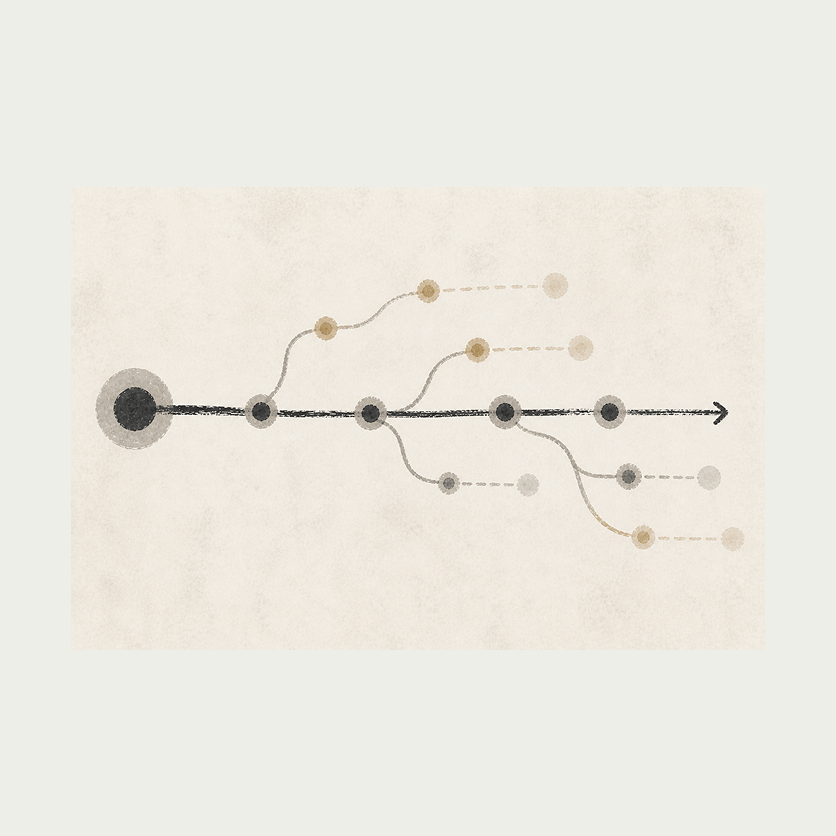

Encore.ts consists of two parts:

- A backend framework for defining microservices, APIs, and declarative infrastructure.
- A high-performance runtime with a multi-threaded, asynchronous event loop written in Rust (using Tokio and Hyper).

The Encore Runtime handles all I/O, such as accepting and processing incoming HTTP requests. It runs as a completely independent event loop that uses as many threads as the underlying hardware supports.

Once a request has been fully processed and decoded, the runtime hands it over to the Node.js event loop, then takes the response from the API handler and writes it back to the client.
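The division of labour can be modelled in a single TypeScript file. This is only a model: in Encore.ts the decode and encode stages run in Rust across many threads, whereas here everything runs on one thread, and all type and function names are hypothetical rather than Encore APIs.

```typescript
// Hypothetical types for illustration only.
interface RawRequest {
  method: string;
  path: string; // e.g. "/blog/42"
}
interface DecodedRequest {
  id: number;
}
interface BlogPostSummary {
  id: number;
  title: string;
}

// Stage 1 (done in Rust by Encore.ts): parse and decode the raw request.
function decodeRawRequest(raw: RawRequest): DecodedRequest {
  const match = /^\/blog\/(\d+)$/.exec(raw.path);
  if (raw.method !== "GET" || match === null) {
    throw new Error(`no route for ${raw.method} ${raw.path}`);
  }
  return { id: Number(match[1]) };
}

// Stage 2 (the Node.js event loop): business logic on an already-decoded object.
async function handleGetBlogPost(req: DecodedRequest): Promise<BlogPostSummary> {
  return { id: req.id, title: `Post ${req.id}` };
}

// Stage 3 (done in Rust by Encore.ts): encode the response for the client.
function encodeResponse(body: BlogPostSummary): string {
  return JSON.stringify(body);
}

// In this single-threaded model the three stages simply run in sequence.
async function serve(raw: RawRequest): Promise<string> {
  const decoded = decodeRawRequest(raw);
  const result = await handleGetBlogPost(decoded);
  return encodeResponse(result);
}

serve({ method: "GET", path: "/blog/42" }).then((body) => console.log(body));
// prints {"id":42,"title":"Post 42"}
```

The point of the split is that the handler in stage 2 never sees raw HTTP; it only receives a typed object.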

(Before you say it: Yes, we put an event loop in your event loop, so you can event-loop while you event-loop.)

Boost #2: Precomputing request schemas

Encore.ts, as the name suggests, is designed from the ground up for TypeScript. But you can't actually run TypeScript: it first has to be compiled to JavaScript, which strips all the type information. This makes run-time type safety much harder to achieve, and with it things like validating incoming requests. That is why solutions like Zod have become popular for defining API schemas at runtime instead.
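A short example shows the problem: a type annotation or cast is erased at compile time, so nothing stops malformed data from flowing through. The trimmed-down `BlogPost` type and the `isBlogPost` type guard below are hypothetical; a hand-written guard is one way to add the missing runtime check, and schema libraries like Zod exist largely to avoid writing such checks by hand.

```typescript
// Hypothetical, trimmed-down blog post type.
interface BlogPost {
  id: number;
  title: string;
}

// This cast type-checks, but types are erased at compile time, so nothing
// verifies the data at runtime.
const unchecked = JSON.parse('{"id": "not-a-number", "title": "Hi"}') as BlogPost;
console.log(typeof unchecked.id); // prints: string

// A hand-written type guard restores a runtime check for this one type.
function isBlogPost(value: unknown): value is BlogPost {
  if (typeof value !== "object" || value === null) return false;
  const candidate = value as { id?: unknown; title?: unknown };
  return typeof candidate.id === "number" && typeof candidate.title === "string";
}

console.log(isBlogPost(unchecked)); // false
console.log(isBlogPost({ id: 1, title: "Hello" })); // true
```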

Encore.ts works differently. With Encore, you define type-safe APIs using native TypeScript types:

```typescript
import { api } from "encore.dev/api";

interface BlogPost {
  id: number;
  title: string;
  body: string;
  likes: number;
}

export const getBlogPost = api(
  { method: "GET", path: "/blog/:id", expose: true },
  // The `id` path parameter arrives already decoded as a number.
  async ({ id }: { id: number }): Promise<BlogPost> => {
    // ...
  },
);
```

Encore.ts then parses the source code to understand the request and response schema that each API endpoint expects, including things like HTTP headers, query parameters, and so on. The schemas are then processed, optimized, and stored as a Protobuf file.

When the Encore Runtime starts up, it reads this Protobuf file and pre-computes a request decoder and response encoder for each API endpoint, optimized for the exact type definitions that endpoint expects. In fact, Encore.ts even handles request validation directly in Rust, ensuring invalid requests never even reach the JS layer, which mitigates many denial-of-service attacks.
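To illustrate what "pre-computing" a decoder means, here is a TypeScript sketch that turns a simple schema into a list of per-field steps once, at startup, and then reuses that compiled function for every request. The schema format, `compileDecoder`, and `decodeGetBlogPost` are hypothetical; Encore.ts does this in Rust from its generated Protobuf schema, and its real decoders cover much more, such as HTTP headers and query parameters.

```typescript
// Hypothetical, simplified schema format: field name -> primitive type.
type FieldType = "number" | "string";
type Schema = { [field: string]: FieldType };
type RawParams = { [field: string]: string | undefined };
type Decoded = { [field: string]: number | string };
type Step = (raw: RawParams, out: Decoded) => void;

// Runs once per endpoint at startup: each field becomes a small step
// function, so the schema itself is not re-interpreted on every request.
function compileDecoder(schema: Schema): (raw: RawParams) => Decoded {
  const steps: Step[] = Object.keys(schema).map((field): Step => {
    if (schema[field] === "number") {
      return (raw, out) => {
        const value = raw[field];
        const n = Number(value);
        if (value === undefined || value.trim() === "" || !isFinite(n)) {
          throw new Error(`invalid "${field}": expected a number`);
        }
        out[field] = n;
      };
    }
    return (raw, out) => {
      const value = raw[field];
      if (value === undefined) throw new Error(`missing "${field}"`);
      out[field] = value;
    };
  });

  // The compiled decoder just runs the precomputed steps in order.
  return (raw) => {
    const out: Decoded = {};
    for (const step of steps) step(raw, out);
    return out;
  };
}

// Compiled once for a GET /blog/:id-style endpoint, reused for every request.
const decodeGetBlogPost = compileDecoder({ id: "number" });
console.log(decodeGetBlogPost({ id: "42" })); // { id: 42 }
```

Invalid input, such as `{ id: "abc" }`, throws inside the decoder, so in this model the handler is never called with it.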

Encore's understanding of the request schema also proves beneficial from a performance perspective. JavaScript runtimes like Deno and Bun use an architecture similar to Encore's Rust-based runtime (in fact, Deno also uses Rust, Tokio, and Hyper), but they lack Encore's understanding of the request schema. As a result, they need to hand the unprocessed HTTP requests over to the single-threaded JavaScript engine for execution.

Encore.ts, on the other hand, handles much more of the request processing inside Rust and only hands over the decoded request objects. Because more of the request lifecycle runs in multi-threaded Rust, the JavaScript event loop is free to focus on executing application business logic instead of parsing HTTP requests, yielding an even greater performance boost.

Boost #3: Infrastructure Integrations

Careful readers might have noticed a trend: the key to performance is to off-load as much work as possible from the single-threaded JavaScript event loop.

We've already looked at how Encore.ts off-loads most of the request/response lifecycle to Rust. So what more is there to do?

Well, backend applications are like sandwiches. You have the crusty top layer, where you handle incoming requests. In the center you have your delicious toppings (that is, your business logic, of course). At the bottom you have your crusty data access layer, where you query databases, call other API endpoints, and so on.

We can't do much about the business logic (we want to write that in TypeScript, after all!), but there's not much point in having all the data access operations hogging our JS event loop. If we moved those to Rust, we'd further free up the event loop to focus on executing our application code.

So that's what we did.

With Encore.ts, you can declare infrastructure resources directly in your source code.

For example, to define a Pub/Sub topic:

```typescript
import { Topic } from "encore.dev/pubsub";

interface UserSignupEvent {
  userID: string;
  email: string;
}

export const UserSignups = new Topic<UserSignupEvent>("user-signups", {
  deliveryGuarantee: "at-least-once",
});

// To publish (for example, from inside an API handler):
await UserSignups.publish({ userID: "123", email: "user@example.com" });
```

"So which Pub/Sub technology does it use?", I hear you ask. All of them! The Encore Rust runtime includes implementations for most common Pub/Sub technologies, including AWS SQS+SNS, GCP Pub/Sub, and NSQ, with more planned (Kafka, NATS, Azure Service Bus, etc.). You can specify the implementation on a per-resource basis in the runtime configuration when the application boots up, or let Encore's Cloud DevOps automation handle it for you.
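Choosing an implementation per resource can be pictured as a lookup from topic to provider that happens when the application boots. In the sketch below, the `PubSubProvider` interface, the `topicConfig` map, and the provider keys are hypothetical stand-ins (Encore's real configuration format and its Rust implementations are not shown), and `RecordingProvider` simply records messages in memory.

```typescript
// Hypothetical provider interface; Encore's real implementations live in Rust.
interface PubSubProvider {
  readonly kind: string;
  publish(topic: string, message: string): Promise<void>;
}

// Stand-in provider that just records what it was asked to publish.
class RecordingProvider implements PubSubProvider {
  readonly kind: string;
  readonly sent: { topic: string; message: string }[] = [];
  constructor(kind: string) {
    this.kind = kind;
  }
  async publish(topic: string, message: string): Promise<void> {
    this.sent.push({ topic, message });
  }
}

// Hypothetical boot-time configuration: which implementation backs each topic.
const topicConfig: { [topic: string]: string | undefined } = {
  "user-signups": "gcp-pubsub",
  "audit-events": "nsq",
};

// One instance per implementation, created when the application boots.
const providers: { [kind: string]: PubSubProvider | undefined } = {
  "gcp-pubsub": new RecordingProvider("gcp-pubsub"),
  nsq: new RecordingProvider("nsq"),
};

function providerFor(topic: string): PubSubProvider {
  const kind = topicConfig[topic];
  const provider = kind === undefined ? undefined : providers[kind];
  if (provider === undefined) {
    throw new Error(`no Pub/Sub implementation configured for "${topic}"`);
  }
  return provider;
}

console.log(providerFor("user-signups").kind); // gcp-pubsub
```

Application code only ever talks to the interface, so swapping NSQ for GCP Pub/Sub in this model is a configuration change rather than a code change.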

Beyond Pub/Sub, Encore.ts includes infrastructure integrations for PostgreSQL databases, Secrets, Cron Jobs, and more.

All of these infrastructure integrations are implemented in the Encore.ts Rust runtime.

This means that as soon as you call .publish(), the payload is handed over to Rust, which takes care of publishing the message, retrying if necessary, and so on. The same goes for database queries, subscribing to Pub/Sub messages, and more.
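Encore.ts performs this retry logic in Rust. Purely to illustrate the general idea, here is a retry-with-exponential-backoff sketch in TypeScript; the `send` transport, the default of five attempts, and the 50 ms base delay are hypothetical and not Encore's actual retry policy.

```typescript
// Hypothetical transport: resolves on success, rejects on a (transient) failure.
type Send = (payload: string) => Promise<void>;

const sleep = (ms: number): Promise<void> =>
  new Promise((resolve) => setTimeout(resolve, ms));

// Retries with exponential backoff: waits baseDelayMs, then 2x, 4x, ...
// between attempts. Resolves with the number of attempts that were needed,
// or rethrows the last error once maxAttempts is reached.
async function publishWithRetry(
  send: Send,
  payload: string,
  maxAttempts = 5,
  baseDelayMs = 50,
): Promise<number> {
  for (let attempt = 1; ; attempt++) {
    try {
      await send(payload);
      return attempt;
    } catch (err) {
      if (attempt >= maxAttempts) throw err;
      await sleep(baseDelayMs * 2 ** (attempt - 1));
    }
  }
}
```

Because the topic above is declared `at-least-once`, a retry after a lost acknowledgement can deliver the same message twice, which is why subscribers to such topics are generally written to be idempotent.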

The end result is that with Encore.ts, virtually all work that isn't business logic is off-loaded from the JS event loop.

In essence, with Encore.ts you get a truly multi-threaded backend "for free", while still being able to write all your business logic in TypeScript.

Conclusion

Whether or not this performance is important depends on your use case. If you're building a tiny hobby project, it's largely academic. But if you're shipping a production backend to the cloud, it can have a pretty large impact.

Lower latency has a direct impact on user experience. To state the obvious: A faster backend means a snappier frontend, which means happier users.

Higher throughput means you can serve the same number of users with fewer servers, which directly corresponds to lower cloud bills. Or, conversely, you can serve more users with the same number of servers, ensuring you can scale further without encountering performance bottlenecks.
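The server-count argument is simple arithmetic. Assuming throughput scales linearly with the number of servers (and ignoring headroom, which real capacity planning needs), the number of servers is the peak load divided by per-server throughput, rounded up. The numbers below are made up for illustration.

```typescript
// Servers needed for a given peak load, assuming linear scaling and no headroom.
function serversNeeded(peakRps: number, rpsPerServer: number): number {
  return Math.ceil(peakRps / rpsPerServer);
}

// Hypothetical: 90,000 req/s at peak, with 10,000 vs 30,000 req/s per server.
console.log(serversNeeded(90000, 10000)); // 9
console.log(serversNeeded(90000, 30000)); // 3
```

Tripling per-server throughput cuts the fleet to a third in this idealized model; in practice the saving is smaller once headroom and non-CPU bottlenecks are accounted for.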

While we're biased, we think Encore offers a pretty excellent, best-of-all-worlds solution for building high-performance backends in TypeScript. It's fast, it's type-safe, and it's compatible with the entire Node.js ecosystem. And it's all open-source, so you can check out the code and contribute on GitHub. Or just give it a try and let us know what you think!
