Designing an algorithmic cloud infrastructure provisioning system

Defeating cloud clutter (a not-so-mythical beast)

Provisioning and configuring cloud infrastructure is tough. To begin with, each cloud provider offers a myriad of different products. Many of them do almost the same thing, only in subtly different ways.

For example, Google Cloud Platform lists seven official products for hosting applications, and the corresponding high-level chart compares 13 technical properties. But that's obviously just the tip of the iceberg. Combine this with a ton of database/data storage options and network configurations, and you're likely to suffer a small nervous breakdown.

The feature sprawl across the major cloud providers is hard to keep up with and the resulting combinatorial explosion can easily lead to choice paralysis. Yet what is perhaps less appreciated is the incredible minutiae you have to deal with just to deploy even the simplest of backend applications. The creative drain this represents is beastly, a mighty dragon indeed.

Since everyone loves being made aware of an annoying noise they had subconsciously blocked out, it's our great honor and privilege to bring the sheer magnitude of cloud clutter to your attention with this article. You're welcome.

But first, a cloud native digression

Our day job is building a backend development platform designed to help you create cloud native backend applications. Before you start muttering, "What does cloud native even mean?", let's just say it means applications that are designed from the ground up to leverage cloud infrastructure to its fullest. Such applications are, to a large extent, composed of cloud infrastructure services. We call those services cloud primitives.

Cloud primitives are the core building blocks of your application, such as services, databases, caches, PubSub topics, and scheduled jobs. Fully leveraging such primitives makes for incredibly scalable and reliable systems.

Unfortunately, going all in on cloud primitives has been a time-tested way to get absolutely nothing useful accomplished, at least any time soon. This is partly because it increases the complexity of your application, and partly because the developer experience is truly awful.

Models to train dragons

Freeing you from cloud complexity and poor developer experience is what we are working on at Encore. Through static analysis of your code, combined with service insights, we are able to infer your infrastructure requirements and automatically provision exactly what you need. You don't really have to do anything beyond writing your application business logic. Just sit back, relax, and watch the progress bar.

However, sometimes you have specific requirements that Encore is unable to infer automatically. You might for example want to use an existing database or SQL server. Or you may want to deploy your stuff to your own pre-existing Kubernetes cluster.

To support these use cases and more, we are currently revising our infrastructure planning algorithm to allow for user-defined constraints. Many of the examples of cloud infrastructure insanity we're about to show you stem from this work.

So how does it work, you ask? The infrastructure computation is evaluated in three layers, starting with a very abstract representation of the infrastructure requirements and gradually adding more and more details.

We start with the Need Model, followed by the Entity Model, and finally we reach the Resource Model, which is the point where subjects spontaneously laugh uncontrollably as a coping mechanism.

The Need Model

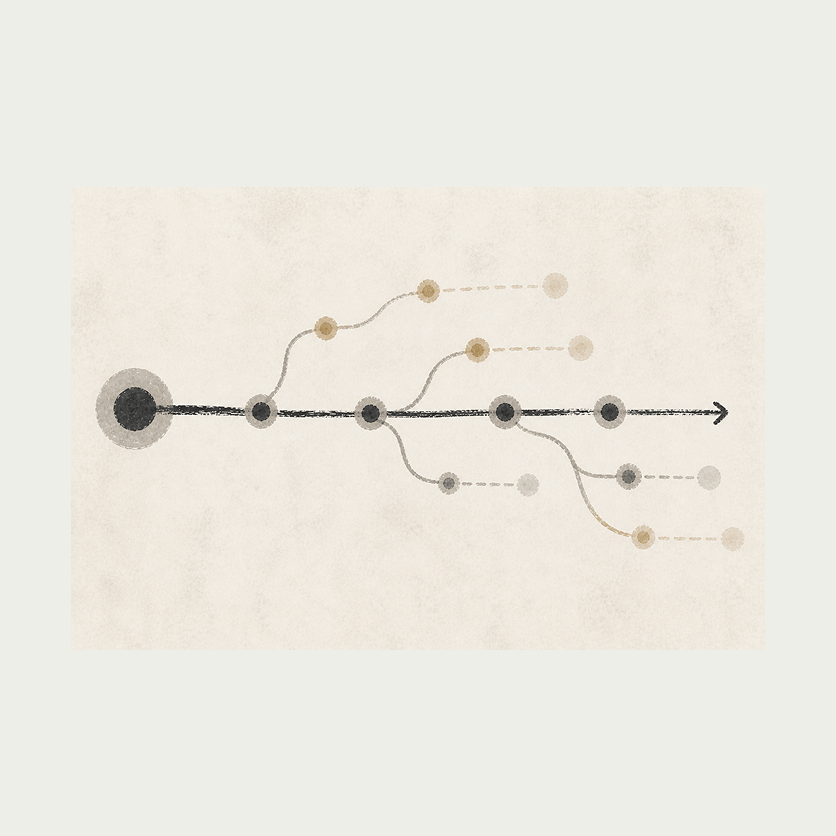

Pushing your code to Encore kicks off our CI/CD pipeline. Aside from compiling and testing your app, the build step also runs the static analysis to extract those infrastructure needs.

From a high-level perspective, this takes the form of a graph containing all your backend primitives and the links between them. You can see a simple example in the figure above.

The planning algorithms then calculate the difference between the currently deployed version of the application and the recently pushed changes. We call the output of this comparison the Need Model. The Need Model is flat, cloud agnostic, and expresses the environment-independent core needs of your application.
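To make the comparison step concrete, here is a minimal sketch of diffing two flat sets of needs. The `Need` type, the `diff_needs` function, and the example names are all hypothetical and chosen for illustration; this is not Encore's actual data model or algorithm.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Need:
    """One backend primitive the application requires (hypothetical shape)."""
    kind: str  # e.g. "service", "database", "pubsub_topic"
    name: str


def diff_needs(deployed: set[Need], pushed: set[Need]) -> dict[str, set[Need]]:
    """Compare two flat sets of needs and report what was added or removed."""
    return {
        "added": pushed - deployed,
        "removed": deployed - pushed,
    }


# Assumed example: the deployed app has one service and one database,
# and the pushed change adds a second service and a PubSub topic.
deployed = {Need("service", "users"), Need("database", "users-db")}
pushed = deployed | {Need("service", "emails"), Need("pubsub_topic", "signups")}

changes = diff_needs(deployed, pushed)
for need in sorted(changes["added"], key=lambda n: (n.kind, n.name)):
    print(f"new need: {need.kind} {need.name}")
```

Because the needs are flat and cloud agnostic, plain set operations are enough in this sketch. Nothing here knows about GCP or AWS yet.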

The Entity Model

Any new needs identified are added to a high-level model we call the Entity Model. The Entity Model is a hierarchical graph of your application. It contains abstract representations of cloud-specific products and their relations. For each cloud, we've modeled supported cloud products as "providers" of entities that are capable of fulfilling your (every) need.

For example, a Cloud Run Container is a provider for Services, whereas a Cloud SQL Server is a provider for databases. The providers are wrapped in parent containers that encapsulate the capabilities of their children.

Each top-level node of the Entity Model is called a Root Entity. A Root Entity can for example be a GCP organization, an AWS account, or your existing external database which fulfills the need for a specific database.

Each provider level can use the Need Model, metrics, and knowledge of the current infrastructure topology to make informed proposals about which provider/product is best suited to your needs. If you have special requirements or preferences, you can express them as constraints on the planner.

You can for example instruct the planner to deploy a specific service to a Kubernetes cluster or provide a connection string to satisfy your particular database need.
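As a rough illustration of how a constraint could override a default proposal, consider the sketch below. The provider names, the `Constraint` type, and `plan_entities` are assumptions made up for this example, as are the cluster name and connection string. The real planner also weighs metrics and the current topology, which this sketch ignores.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical defaults, loosely following the GCP examples in the text.
DEFAULT_PROVIDERS = {
    "service": "cloud_run_container",
    "database": "cloud_sql_server",
}


@dataclass(frozen=True)
class Constraint:
    """A user preference pinning one named need to a specific provider."""
    need_name: str
    provider: str     # e.g. "kubernetes_cluster" or "external_database"
    detail: str = ""  # e.g. a cluster name or a connection string


def plan_entities(needs, constraints):
    """Pick a provider per (kind, name) need; a user constraint wins over the default."""
    by_name = {c.need_name: c for c in constraints}
    plan = {}
    for kind, name in needs:
        constraint = by_name.get(name)
        if constraint is not None:
            plan[name] = (constraint.provider, constraint.detail)
        else:
            plan[name] = (DEFAULT_PROVIDERS[kind], "")
    return plan


needs = [("service", "users"), ("service", "emails"), ("database", "users-db")]
constraints = [
    Constraint("emails", "kubernetes_cluster", "my-cluster"),
    Constraint("users-db", "external_database", "postgres://example.invalid/users"),
]

plan = plan_entities(needs, constraints)
for name, (provider, detail) in plan.items():
    print(name, "->", provider, detail)
```

Here the unconstrained `users` service falls back to the default provider, while the other two needs follow the user's wishes.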

The Resource Model

The Entity Model is then further refined into what we call the Resource Model. The Resource Model is pretty much a mapping of the high-level entities to lower-level cloud provider resources. This model is what our provisioning system uses to create and modify the cloud resources that are necessary to host your app's building blocks.

The Resource Model is computed by mapping each entity in the Entity Model to a set of resources and/or updates to shared resources. This step is a bit more complex because the resources are sometimes indirectly coupled and can in the worst case affect each other. For example, a Cloud Run Container can only use one Serverless VPC Access connector, which impacts how Encore configures the connection to database servers and caches.

The general idea is similar to the Entity Model though: generate a list of resources that satisfy the needs of a bunch of higher-level entities.
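The sketch below shows one way such a mapping could look: each entity expands into a handful of resources, and resources shared between entities are only emitted once. The expansion table, the resource names, and the functions `expand` and `build_resource_model` are hypothetical. Real mappings involve far more resources and coupling than this.

```python
def expand(entity):
    """Map one (kind, name) entity to the resources it needs (illustrative only)."""
    kind, name = entity
    if kind == "cloud_run_container":
        return [
            ("cloud_run_service", name),
            ("service_account", f"{name}-sa"),
            # Assumed to be shared: every container reuses the same connector.
            ("vpc_access_connector", "shared-connector"),
        ]
    if kind == "cloud_sql_server":
        return [
            ("cloud_sql_instance", name),
            ("vpc_network", "shared-vpc"),
        ]
    raise ValueError(f"no resource mapping for entity kind {kind!r}")


def build_resource_model(entities):
    """Expand every entity, keeping each shared resource only once, in first-seen order."""
    resources = []
    seen = set()
    for entity in entities:
        for resource in expand(entity):
            if resource not in seen:
                seen.add(resource)
                resources.append(resource)
    return resources


entities = [
    ("cloud_run_container", "users"),
    ("cloud_run_container", "emails"),
    ("cloud_sql_server", "main-db"),
]

resources = build_resource_model(entities)
for kind, name in resources:
    print(kind, name)
```

Even in this toy version, three entities turn into seven resources, and adding a second container adds more than one line to the output.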

This is fine

In the example depicted above, we used a boring app with a very neat mapping between the Encore needs and the resulting cloud resources. Alas, this is not always the case. Relatively small tweaks to the Entity Model can quickly balloon into extremely complex Resource Models.

To illustrate this, let's move one of our Encore Services to run on a separate Cloud Run Container. Given this new constraint, the infrastructure planner happily computes a proposal for a new Entity and Resource Model which satisfies the Need Model.

Well, that escalated quickly. Really, that got out of hand fast! Where did all the colorful boxes come from? Since the services now run on separate Cloud Run Containers, we need to configure a bunch of additional IAM bindings (plus associated roles and accounts).

Now let's say we for some obscure reason want to run one of our databases on a separate Cloud SQL Server. To make things even more interesting we may as well shove it into a separate GCP Project and ditch the Cloud SQL Proxy. After crunching your demands, the planner generates yet another infrastructure proposal, and this time it's starting to look rather unwieldy.

We're moving the database to a separate GCP Project, and since we don't use Cloud SQL Proxy, we also need to configure the Cloud Run Container to use a Serverless VPC Access connector. We also configure a Shared VPC to virtually host both databases in the same VPC. On top of this, we need to dish out some more IAM bindings, configure a Global Address, etc.

This is still an extremely simple app (albeit some would argue it has silly constraints), but the infrastructure configuration has already started to balloon out of proportion.

Fortunately for those of you using Encore, you don't really need to care. Encore does all the heavy lifting for you and will soon happily cater to all (or at least most) of your obscure, exotic, and slightly lunatic needs. Unfortunately for those of you working on Encore, this is your life now.

What's next

We're currently in the midst of fine-tuning the next generation of our planning algorithm and will eventually migrate our existing, more naive models to the shiny, handsome, and all in all superior replacement. This will yield a plethora of exciting new opportunities!

To begin with, we're working on extending our visual architecture tool, Encore Flow, to also give you a fabulous overview of your provisioned cloud infrastructure. We'll also be able to better visualize changes to your infrastructure, and eventually you'll be able to effortlessly plan and migrate services, databases, or whole apps between whatever products you fancy.

And if you don't want to bother, we'll automatically provision what's best for you, taking both cost and performance into account.

We'll tame the complexity dragon and disperse the cloud clutter, creating a brighter and sunnier future for us all.
