Distributed systems are often described as a double-edged sword. There is plenty of excellent content out there on both why they suck and why they are great. This is not one of those posts. Generally, I am an advocate of distributed systems where they make sense, but the goal of this blog post (and the two that will follow) is to share some stories about where I got something wrong within a distributed system in a way that had a far-reaching impact.

In this first post, I will share a mistake that I have now seen made at multiple companies and that can lead to cascading failure. I call it the Kubernetes deep health check.

The Kubernetes Conundrum: A Tale of Liveness, Readiness, and the Pitfalls of Deep Health Checks

Kubernetes is a container orchestration platform. It is a popular option for folks building a distributed system and for good reason; it provides a sensible and cloud-native abstraction over infrastructure which makes it possible for developers to configure and run their applications without having to become a networking expert.

Kubernetes allows and encourages you to configure a few different types of probes: liveness, readiness and startup probes. Conceptually, these probes are simple and are described as follows:

- Liveness probes are used to tell Kubernetes when to restart a container. If the liveness probe fails, the container is restarted. This can be used to catch issues such as a deadlock and make your application more available. My colleagues at Cloudflare have written about how we use this to restart “stuck” Kafka consumers here.

- Readiness probes are used to signal that a container is ready to start receiving traffic. They are not limited to HTTP applications, although those are the focus of this post. A pod is considered ready to receive traffic when all of its containers are ready. If any container in a pod fails its readiness probe, the pod is removed from the service load balancer and will not receive any requests. Failing a readiness probe does not cause your pod to restart like failing a liveness probe does.

- Startup probes are generally recommended for legacy applications that take a while to start up. Until an application passes its startup probes, liveness and readiness probes are not considered.

For the rest of this post, we are going to zoom in on readiness probes for HTTP-based applications.

When is my Application Ready?

This seems like a pretty simple question, right? “My application is ready when it can respond to the requests from a user”, you might respond. Let’s consider an application for a payment company that lets you check your balance in the app. When a user opens the mobile app, it makes a call to one of your many backend services. The service receiving the request is responsible for:

- Validating a user’s token by checking with an auth service.

- Calling the service that holds the balance.

- Emitting a balance_viewed event to Kafka.

- (Via a different endpoint) allowing a user to lock their account, which updates a row in the service’s own database.

Therefore, for our application to successfully serve customers, you could argue that it is dependent on:

- The auth service being available.

- The balance service being available.

- Kafka being available.

- Our database being available.

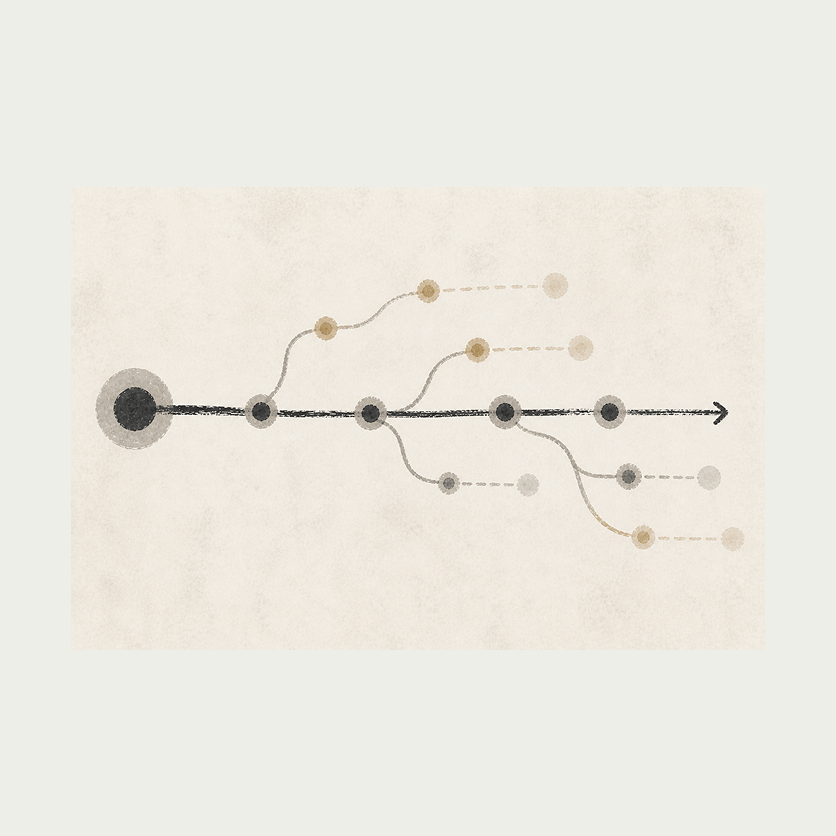

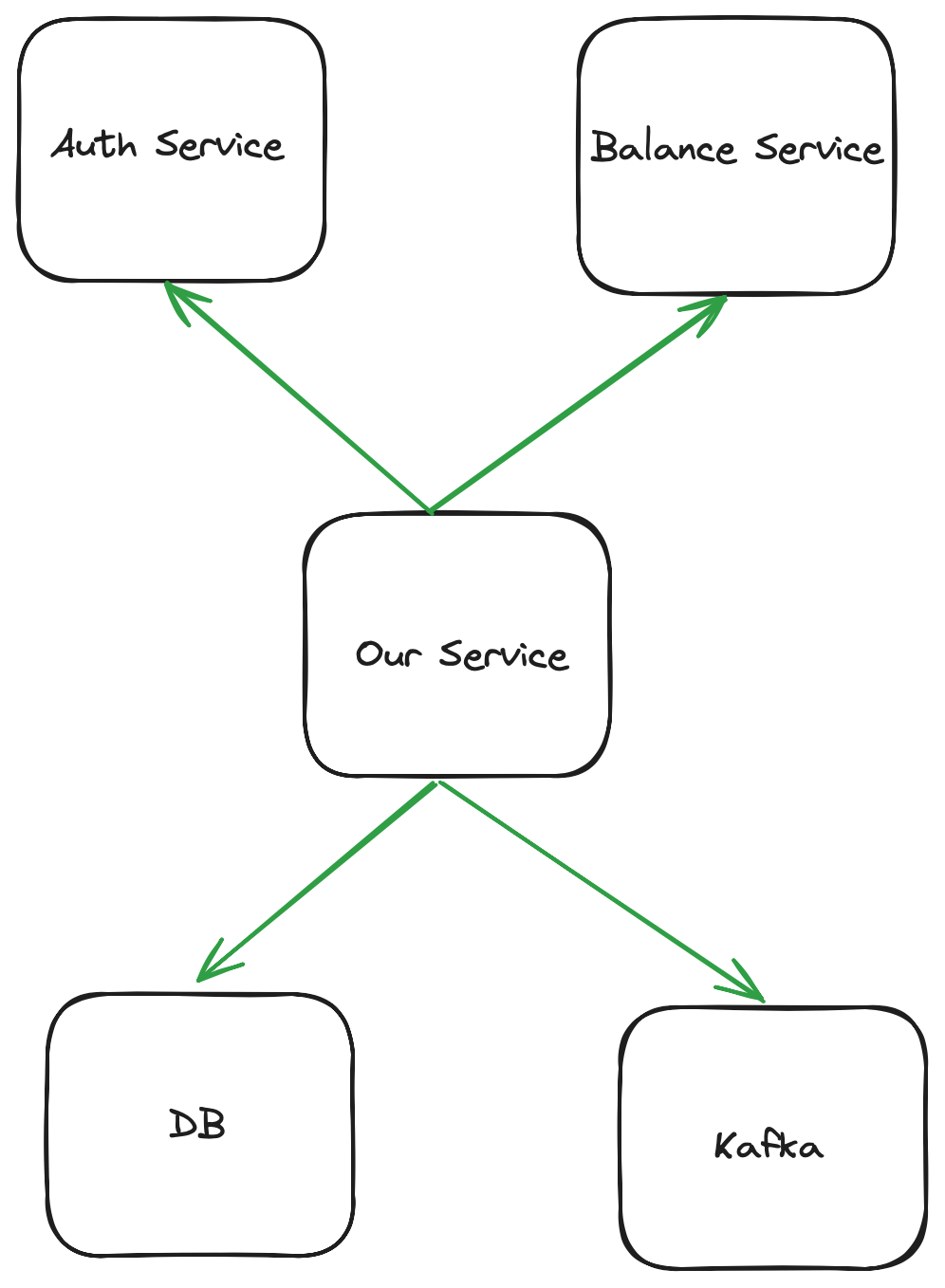

A graph of its dependencies will look something like this:

We could therefore write a readiness endpoint that returns JSON and a 200 when all the following are available:

    {
      "available": {
        "auth": true,
        "balance": true,
        "kafka": true,
        "database": true
      }
    }

In this instance, available could mean different things:

- For auth and balance, we check that we get a 200 back from their readiness endpoint.

- For Kafka, we check that we can emit an event to a topic called healthcheck.

- For the database, we run SELECT 1;

If any of these checks failed, we would return false for the corresponding JSON key and return an HTTP 500 error. Kubernetes treats this as a readiness probe failure and removes the pod from the service load balancer. This might seem reasonable at first glance, but it can lead to cascading failure, which arguably defeats one of the biggest benefits of microservices (isolated failure).

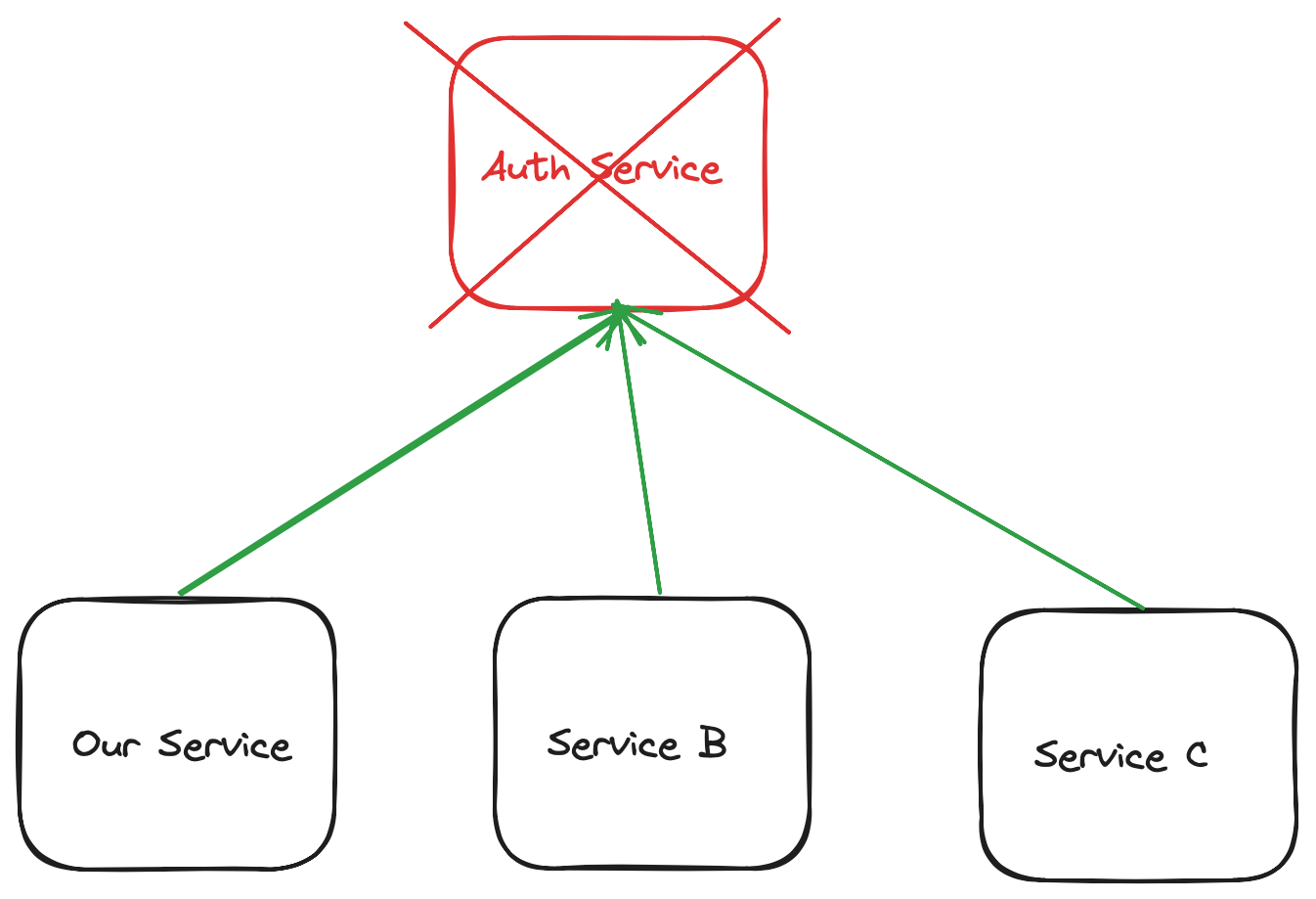

Imagine the following scenario where the auth service has gone down and all of the services in our company have it listed as a deep readiness check:

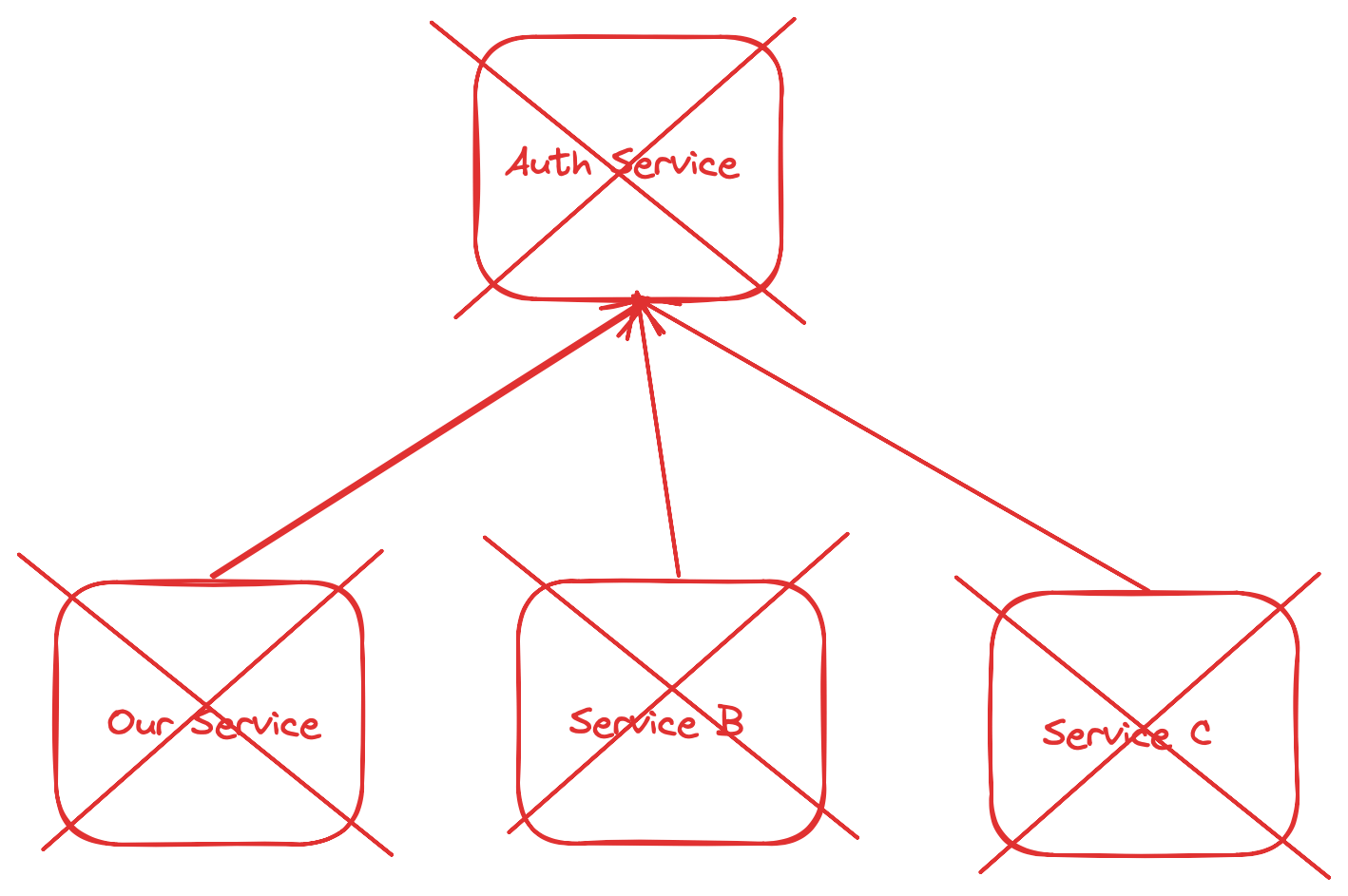

The failure of the auth service leads to all of our pods being removed from the load balancer for our service; we have a complete outage:

What’s worse is that we likely have very few metrics to tell us why this failure happened. Since requests are not reaching our pods, we can’t increment all the carefully placed Prometheus metrics we added in our code; instead, we need to look at all of the pods that are marked as not ready in our cluster.

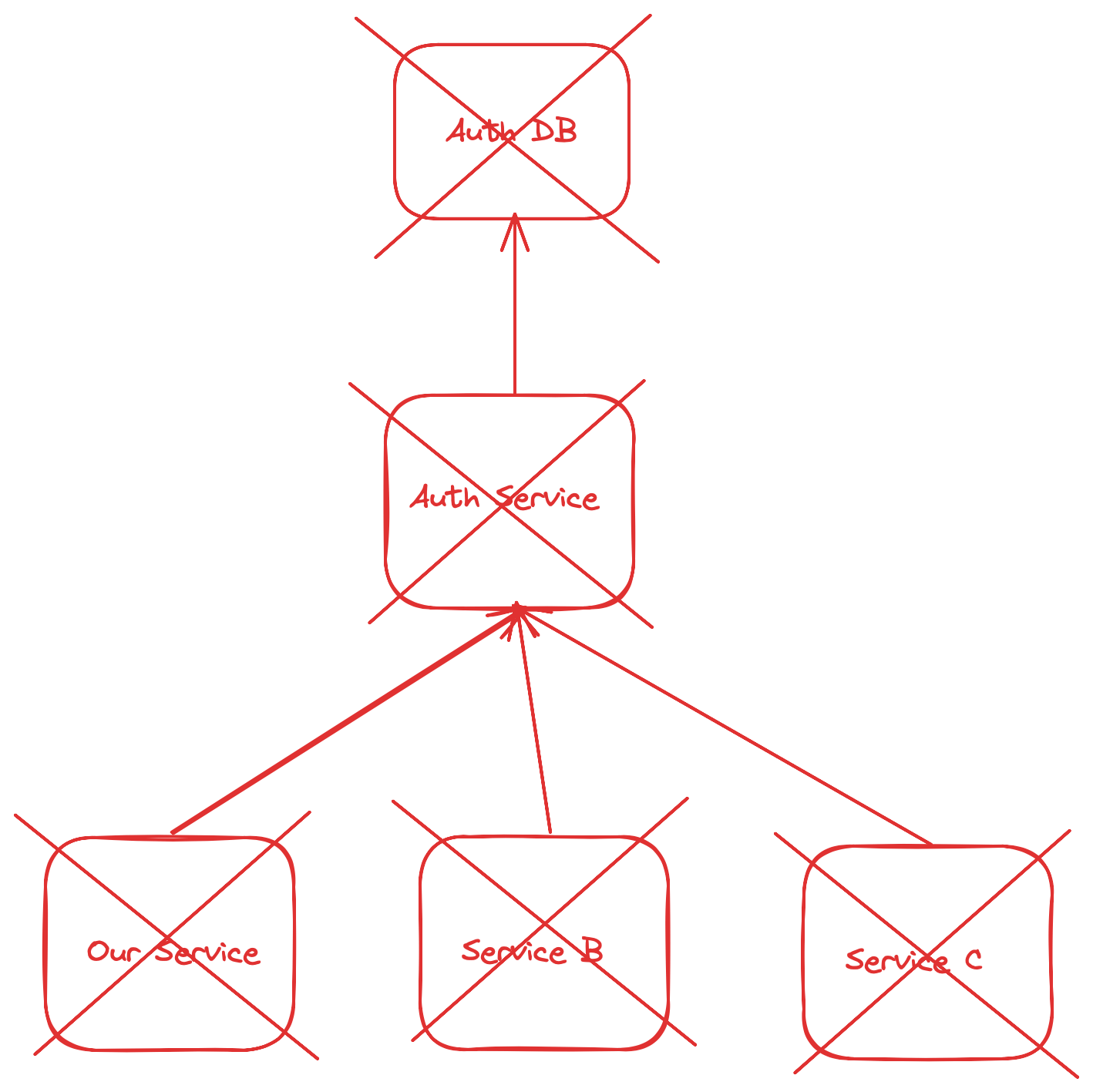

We then must hit their readiness endpoint to figure out which dependency is causing it and follow the tree; the auth service might be down because one of its own dependencies are down.

Something like this:

In the meantime, our users are seeing this:

upstream connect error or disconnect/reset before headers. reset reason: connection failure

Not a very friendly error message, right? We can and must do much better.

So, When is my Application Ready?

Your application is ready if it can serve a response. The response it serves might be a failure response, but producing it still means executing business logic. For example, if the auth service is down, we can (and should) first retry with exponential backoff while incrementing a counter for each failure. If we still cannot get a successful response, we should return a 5xx error code to our user and increment another counter. If either of these counters reaches a threshold you deem unacceptable (as defined by your SLOs), you can declare a well-scoped incident.

In the meantime, there will (hopefully) be portions of your business that can continue to operate, as not everything was dependent on the service that went down.

Once the incident is resolved, we should consider whether our service needs that dependency and whether there is work we can do to remove it. Can we move to a more stateless model of auth? Should we use a cache? Can we circuit-break in some of the user flows? Should we carve some of the workflows that don’t need so many dependencies out into another service to isolate failure further in the future?

Wrapping Up

Based on conversations I have had, I expect this blog post to be quite divisive. Some folks will think I am an idiot for ever having implemented deep health checks as of course it would lead to cascading failure. Others will share this in their Slack channel and ask “are we doing readiness wrong?” where a senior engineer will show up and argue that their case is special and it makes sense for them (and maybe it does, I’d love to hear about your use case if so).

When we make things distributed, we add complexity. It's always worth being a pessimist and thinking with a failure-first mindset whenever you work on distributed systems. This approach isn't about expecting failure but being prepared for it. It's about understanding the interconnected nature of our systems and the ripple effects a single point of failure can have.

The key takeaway from my Kubernetes tale isn't to shun deep health checks but to use them carefully. Balance is crucial; we need to weigh the benefits of thorough health checks against the potential for widespread system impacts. Learning from our mistakes and those of others is what makes us better developers and more resilient in the face of system complexity. I share my story in the hope that you share yours too.

I look forward to learning from you.

— Matt

About The Author

Matthew Boyle is an experienced technical leader in the field of distributed systems, specializing in using Go.

He has worked at huge companies such as Cloudflare and General Electric, as well as exciting high-growth startups such as Curve and Crowdcube.

Matt has been writing Go for production since 2018 and often shares blog posts and fun trivia about Go over on Twitter.

He's currently working on a course to help Go Engineers become masters at debugging. You can find more details of that here.
