Echo is one of the more thoughtfully designed Go web frameworks. Handlers return errors instead of relying on panics, the standard context.Context is available through the underlying request, and the documentation is consistently praised by the community. If you've worked with Express in Node.js, Echo's API will feel familiar, but it stays closer to idiomatic Go than most alternatives.

Encore.go shares some of those idiomatic sensibilities, but the scope is different. Where Echo is a web framework focused on HTTP routing and middleware, Encore is a backend development platform that adds infrastructure automation on top of your application code. You declare databases, Pub/Sub topics, cron jobs, and services directly in Go, and Encore provisions them automatically in local development and production. The question isn't which is "better" in the abstract; it's which scope matches what you're building.

Quick Comparison

| Aspect | Echo | Encore.go |
|---|---|---|
| Philosophy | Clean, idiomatic HTTP framework | Infrastructure-from-code backend platform |
| Type Safety | Struct tags + validator middleware | Compile-time typed request/response structs |
| Local Infrastructure | Configure yourself (Docker, env vars) | Automatic (databases, Pub/Sub, cron) |
| Learning Curve | Low, good docs | Low for APIs, moderate for infrastructure concepts |
| Ecosystem | Good middleware selection, smaller than Gin | Infrastructure primitives, service catalog, tracing |
| Observability | Manual setup with OpenTelemetry | Built-in distributed tracing, metrics, logs |
| AI Agent Compatibility | Manual configuration needed | Built-in infrastructure awareness |
| Best For | REST APIs, single-service applications | Distributed systems, multi-service backends |

The Basics: Defining an API

A simple GET endpoint that greets someone by name.

Echo

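    package main

    import (
        "net/http"

        "github.com/labstack/echo/v4"
    )

    func main() {
        e := echo.New()

        // c.Param reads the :name segment from the matched route.
        e.GET("/hello/:name", func(c echo.Context) error {
            name := c.Param("name")
            return c.JSON(http.StatusOK, map[string]string{
                "message": "Hello, " + name + "!",
            })
        })

        // Start blocks until the server stops; log the error it returns.
        e.Logger.Fatal(e.Start(":8080"))
    }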

Echo keeps things clean. You create an instance, define routes with handlers that return errors, and start the server. The echo.Context provides convenience methods like c.Param() for path parameters and c.JSON() for responses. There's no magic here, which is part of the appeal.
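To make the "handlers return errors" idea concrete, here is a minimal sketch of the same pattern built on net/http alone. It is not Echo's implementation: `httpError`, `handlerFunc`, `statusFor`, and `adapt` are hypothetical names invented for this illustration, and the request runs in memory through httptest rather than a real server.

```go
package main

import (
	"errors"
	"fmt"
	"net/http"
	"net/http/httptest"
)

// httpError is a hypothetical error type that carries an HTTP status code.
type httpError struct {
	Code    int
	Message string
}

func (e *httpError) Error() string { return e.Message }

// handlerFunc is a handler that reports failure by returning an error.
type handlerFunc func(w http.ResponseWriter, r *http.Request) error

// statusFor maps a returned error to an HTTP status code.
func statusFor(err error) int {
	var he *httpError
	switch {
	case err == nil:
		return http.StatusOK
	case errors.As(err, &he):
		return he.Code
	default:
		return http.StatusInternalServerError
	}
}

// adapt turns an error-returning handler into a standard http.Handler,
// so error-to-response translation lives in exactly one place.
func adapt(h handlerFunc) http.Handler {
	return http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		if err := h(w, r); err != nil {
			http.Error(w, err.Error(), statusFor(err))
		}
	})
}

// hello greets the caller named in the ?name= query parameter.
func hello(w http.ResponseWriter, r *http.Request) error {
	name := r.URL.Query().Get("name")
	if name == "" {
		return &httpError{Code: http.StatusBadRequest, Message: "missing name"}
	}
	_, err := fmt.Fprintf(w, "Hello, %s!\n", name)
	return err
}

func main() {
	// Call the handler in memory without a name to trigger the error path.
	rec := httptest.NewRecorder()
	adapt(hello).ServeHTTP(rec, httptest.NewRequest(http.MethodGet, "/hello", nil))
	fmt.Println(rec.Code) // 400: the handler returned an httpError
}
```

The benefit of the pattern is that handlers contain only the happy path plus `return err`, while the adapter decides how each error becomes a response.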

Encore.go

    package hello

    import "context"

    type Response struct {
        Message string `json:"message"`
    }

    //encore:api public method=GET path=/hello/:name
    func Hello(ctx context.Context, name string) (*Response, error) {
        return &Response{Message: "Hello, " + name + "!"}, nil
    }

Encore uses a comment annotation to declare the endpoint. The function signature defines the API contract: path parameters are extracted from the function arguments, and the return type becomes the response schema. There's no server setup or routing configuration because Encore handles that.
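One way to picture "the function signature is the contract" is to write by hand the kind of glue a framework could generate around a typed function. The sketch below is only an illustration on top of net/http, not how Encore works internally; `wrapPathParam` and `call` are hypothetical helpers invented here.

```go
package main

import (
	"context"
	"encoding/json"
	"fmt"
	"net/http"
	"net/http/httptest"
	"strings"
)

type Response struct {
	Message string `json:"message"`
}

// Hello is plain business logic: typed input, typed output, no HTTP types.
func Hello(ctx context.Context, name string) (*Response, error) {
	return &Response{Message: "Hello, " + name + "!"}, nil
}

// wrapPathParam is a hypothetical adapter: it takes the path segment after
// prefix, passes it to fn, and encodes fn's typed result as JSON.
func wrapPathParam[T any](prefix string, fn func(context.Context, string) (*T, error)) http.Handler {
	return http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		param := strings.TrimPrefix(r.URL.Path, prefix)
		resp, err := fn(r.Context(), param)
		if err != nil {
			http.Error(w, err.Error(), http.StatusInternalServerError)
			return
		}
		w.Header().Set("Content-Type", "application/json")
		json.NewEncoder(w).Encode(resp)
	})
}

// call runs one in-memory GET request and returns the status and body.
func call(h http.Handler, path string) (int, string) {
	rec := httptest.NewRecorder()
	h.ServeHTTP(rec, httptest.NewRequest(http.MethodGet, path, nil))
	return rec.Code, strings.TrimSpace(rec.Body.String())
}

func main() {
	code, body := call(wrapPathParam("/hello/", Hello), "/hello/Ada")
	fmt.Println(code, body)
}
```

Because `Hello` never touches `http.ResponseWriter`, it can be unit-tested or called from other Go code directly, which is the property the annotation-based style relies on.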

Verdict: Echo's approach is explicit and familiar. You see every piece of the HTTP lifecycle. Encore trades that visibility for conciseness, with the framework handling server configuration and request binding based on function signatures. For a single endpoint, Echo is arguably clearer. As the number of endpoints grows, Encore's declarative style produces less boilerplate.

Type Safety and Validation

Echo

Echo integrates with go-playground/validator for struct-level validation through binding and validation tags:

    package main

    import (
        "net/http"

        "github.com/go-playground/validator/v10"
        "github.com/labstack/echo/v4"
    )

    type CreateUserRequest struct {
        Email string `json:"email" validate:"required,email"`
        Name  string `json:"name" validate:"required,min=1"`
        Age   int    `json:"age" validate:"gte=0,lte=150"`
    }

    type User struct {
        ID    int    `json:"id"`
        Email string `json:"email"`
        Name  string `json:"name"`
        Age   int    `json:"age"`
    }

    // CustomValidator adapts go-playground/validator to Echo's Validator interface.
    type CustomValidator struct {
        validator *validator.Validate
    }

    func (cv *CustomValidator) Validate(i interface{}) error {
        return cv.validator.Struct(i)
    }

    func main() {
        e := echo.New()
        e.Validator = &CustomValidator{validator: validator.New()}

        e.POST("/users", func(c echo.Context) error {
            req := new(CreateUserRequest)
            // Step 1: parse the request body into the struct.
            if err := c.Bind(req); err != nil {
                return err
            }
            // Step 2: check the struct against its validate tags.
            if err := c.Validate(req); err != nil {
                return echo.NewHTTPError(http.StatusBadRequest, err.Error())
            }

            user := &User{ID: 1, Email: req.Email, Name: req.Name, Age: req.Age}
            return c.JSON(http.StatusCreated, user)
        })

        e.Logger.Fatal(e.Start(":8080"))
    }

Echo separates binding (parsing the request body into a struct) from validation (checking the struct meets your constraints). You register a custom validator on the Echo instance, then call c.Bind() followed by c.Validate() in each handler. The validation rules live in struct tags, which is a common Go pattern. It works well, though you do need to wire up the validator yourself and remember to call both steps in every handler.
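One common way to avoid forgetting a step is to fold both calls into a single helper. The sketch below assumes only that the context offers `Bind` and `Validate` with the signatures Echo v4's `echo.Context` exposes; `binder`, `bindAndValidate`, `errInvalid`, and `fakeContext` are hypothetical names, and the fake stands in for a real Echo context so the example runs without the framework.

```go
package main

import (
	"errors"
	"fmt"
)

// binder lists the two methods the helper needs. echo.Context has methods
// with these signatures, so a real Echo context should satisfy it.
type binder interface {
	Bind(i interface{}) error
	Validate(i interface{}) error
}

// errInvalid marks validation failures so callers can map them to a 400.
var errInvalid = errors.New("invalid request")

// bindAndValidate performs both steps so a handler cannot skip one.
func bindAndValidate(c binder, dst interface{}) error {
	if err := c.Bind(dst); err != nil {
		return err
	}
	if err := c.Validate(dst); err != nil {
		return fmt.Errorf("%w: %v", errInvalid, err)
	}
	return nil
}

type CreateUserRequest struct {
	Email string
}

// fakeContext is a stand-in for echo.Context used only by this demo.
type fakeContext struct {
	email string
}

func (f fakeContext) Bind(i interface{}) error {
	req, ok := i.(*CreateUserRequest)
	if !ok {
		return errors.New("unsupported type")
	}
	req.Email = f.email
	return nil
}

func (f fakeContext) Validate(i interface{}) error {
	if req, ok := i.(*CreateUserRequest); ok && req.Email == "" {
		return errors.New("email is required")
	}
	return nil
}

// check binds and validates a request carrying the given email.
func check(email string) error {
	var req CreateUserRequest
	return bindAndValidate(fakeContext{email: email}, &req)
}

func main() {
	fmt.Println(check("a@example.com"))
	fmt.Println(check(""))
}
```

In an Echo handler, the wrapped error would typically be replaced with `echo.NewHTTPError(http.StatusBadRequest, ...)`, as in the example above.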

Encore.go

    package user

    import "context"

    type CreateUserRequest struct {
        Email string `json:"email"`
        Name  string `json:"name"`
        Age   int    `json:"age"`
    }

    type User struct {
        ID    int    `json:"id"`
        Email string `json:"email"`
        Name  string `json:"name"`
        Age   int    `json:"age"`
    }

    //encore:api public method=POST path=/users
    func CreateUser(ctx context.Context, req *CreateUserRequest) (*User, error) {
        return &User{ID: 1, Email: req.Email, Name: req.Name, Age: req.Age}, nil
    }

Encore checks incoming requests against your struct types. The framework parses your Go types at compile time and generates the request-decoding code, so there's no separate validator setup and no validation struct tags. Binding and type checking happen automatically before your handler is called. Note that this covers the shape and types of the request, not value-level rules such as an email format or an age range.
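Value-level rules still need code somewhere. A minimal sketch, assuming a plain `Validate() error` method on the request type: whether and when the framework calls such a method for you is an assumption to check against the current Encore documentation, but the method can always be called explicitly at the top of the handler. The specific rules below are illustrative, not taken from either framework.

```go
package main

import (
	"errors"
	"fmt"
	"strings"
)

type CreateUserRequest struct {
	Email string `json:"email"`
	Name  string `json:"name"`
	Age   int    `json:"age"`
}

// Validate checks value-level rules that field types alone cannot express.
// The email check is deliberately crude and only for illustration.
func (r *CreateUserRequest) Validate() error {
	switch {
	case !strings.Contains(r.Email, "@"):
		return errors.New("email must contain @")
	case r.Name == "":
		return errors.New("name is required")
	case r.Age < 0 || r.Age > 150:
		return errors.New("age must be between 0 and 150")
	}
	return nil
}

func main() {
	good := &CreateUserRequest{Email: "a@example.com", Name: "Ada", Age: 36}
	bad := &CreateUserRequest{Email: "a@example.com", Name: "Ada", Age: 200}
	fmt.Println(good.Validate())
	fmt.Println(bad.Validate())
}
```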

Verdict: Echo's validation through go-playground/validator is flexible and well-understood in the Go ecosystem. You can express complex constraints with struct tags and custom validators. Encore's approach is simpler since validation is automatic, but you have less control over custom validation rules. If you need fine-grained validation logic, Echo gives you more options. If you want zero-configuration request validation, Encore handles it out of the box.

Database Integration

Echo

Echo doesn't include database support, so you bring your own. Most teams use database/sql directly or an ORM like GORM or sqlx:

    package main

    import (
        "database/sql"
        "errors"
        "net/http"
        "os"

        "github.com/labstack/echo/v4"
        _ "github.com/lib/pq" // registers the "postgres" driver
    )

    var db *sql.DB

    func main() {
        var err error
        db, err = sql.Open("postgres", os.Getenv("DATABASE_URL"))
        if err != nil {
            panic(err)
        }
        defer db.Close()

        e := echo.New()

        e.GET("/users/:id", func(c echo.Context) error {
            id := c.Param("id")

            var name, email string
            err := db.QueryRowContext(c.Request().Context(),
                "SELECT name, email FROM users WHERE id = $1", id,
            ).Scan(&name, &email)
            if errors.Is(err, sql.ErrNoRows) {
                return echo.NewHTTPError(http.StatusNotFound, "user not found")
            }
            if err != nil {
                // Connection and query failures are server errors, not missing users.
                return err
            }

            return c.JSON(http.StatusOK, map[string]string{
                "id": id, "name": name, "email": email,
            })
        })

        if err := e.Start(":8080"); err != nil {
            e.Logger.Error(err)
        }
    }

You manage the connection string, connection pool, and lifecycle yourself. For local development, you typically start PostgreSQL with Docker and set the DATABASE_URL environment variable. Migrations are handled by a separate tool like golang-migrate or goose.

Encore.go

    package user

    import (
        "context"

        "encore.dev/storage/sqldb"
    )

    type User struct {
        ID    int    `json:"id"`
        Name  string `json:"name"`
        Email string `json:"email"`
    }

    var db = sqldb.NewDatabase("users", sqldb.DatabaseConfig{
        Migrations: "./migrations",
    })

    //encore:api public method=GET path=/users/:id
    func GetUser(ctx context.Context, id int) (*User, error) {
        var user User
        err := db.QueryRow(ctx,
            "SELECT id, name, email FROM users WHERE id = $1", id,
        ).Scan(&user.ID, &user.Name, &user.Email)
        if err != nil {
            // Don't return a half-filled struct alongside an error.
            return nil, err
        }
        return &user, nil
    }

The sqldb.NewDatabase call declares that this service needs a PostgreSQL database. When you run encore run, Encore provisions a local PostgreSQL instance, creates the database, and runs your migration files automatically. You don't write Docker Compose files, connection strings, or environment variables. The same declaration also drives provisioning in production, where Encore creates an RDS or Cloud SQL instance in your cloud account.

Verdict: The SQL code itself is similar in both cases, standard Go database patterns. The difference is everything around it. With Echo, you manage database lifecycle, connection strings, local setup, and migrations tooling. With Encore, you declare the database and the framework handles the rest. For projects with one database, the Echo approach is manageable. For projects with multiple services each owning their own database, Encore's automation removes a lot of operational overhead.

Middleware and Authentication

Echo

Echo has a clean middleware system where middleware functions return errors, just like handlers:

    package main

    import (
        "net/http"
        "strings"

        "github.com/labstack/echo/v4"
        "github.com/labstack/echo/v4/middleware"
    )

    func main() {
        e := echo.New()

        // Built-in middleware
        e.Use(middleware.Logger())
        e.Use(middleware.Recover())
        e.Use(middleware.CORS())

        // Custom authentication middleware
        authMiddleware := func(next echo.HandlerFunc) echo.HandlerFunc {
            return func(c echo.Context) error {
                token := c.Request().Header.Get("Authorization")
                token = strings.TrimPrefix(token, "Bearer ")
                if token == "" {
                    return echo.NewHTTPError(http.StatusUnauthorized, "missing token")
                }

                userID, err := validateToken(token)
                if err != nil {
                    return echo.NewHTTPError(http.StatusUnauthorized, "invalid token")
                }

                c.Set("userID", userID)
                return next(c)
            }
        }

        // Public route
        e.GET("/health", func(c echo.Context) error {
            return c.JSON(http.StatusOK, map[string]string{"status": "ok"})
        })

        // Protected routes
        api := e.Group("/api", authMiddleware)
        api.GET("/profile", func(c echo.Context) error {
            // c.Get returns interface{}; use the comma-ok form so a missing
            // or mistyped value cannot panic the handler.
            userID, ok := c.Get("userID").(string)
            if !ok {
                return echo.NewHTTPError(http.StatusUnauthorized, "missing user")
            }
            return c.JSON(http.StatusOK, map[string]string{"userID": userID})
        })

        e.Logger.Fatal(e.Start(":8080"))
    }

    func validateToken(token string) (string, error) {
        // Token validation logic
        return "user-123", nil
    }

Echo's middleware pattern is consistent with its handler design: everything returns an error. The echo.Context carries data between middleware and handlers through c.Set() and c.Get(). Route groups make it straightforward to apply middleware to specific paths. It's a well-thought-out system that feels natural.

Encore.go

Encore uses a dedicated auth handler annotation and typed auth data:

    package auth

    import (
        "context"
        "strings"

        "encore.dev/beta/auth"
        "encore.dev/beta/errs"
    )

    type AuthData struct {
        UserID string
    }

    //encore:authhandler
    func AuthHandler(ctx context.Context, token string) (auth.UID, *AuthData, error) {
        token = strings.TrimPrefix(token, "Bearer ")
        if token == "" {
            return "", nil, &errs.Error{
                Code:    errs.Unauthenticated,
                Message: "missing token",
            }
        }

        // validateToken is the same placeholder as in the Echo example,
        // assumed to be defined elsewhere in this package.
        userID, err := validateToken(token)
        if err != nil {
            return "", nil, &errs.Error{
                Code:    errs.Unauthenticated,
                Message: "invalid token",
            }
        }

        return auth.UID(userID), &AuthData{UserID: userID}, nil
    }

Then any endpoint that requires authentication uses the auth access level:

    package profile

    import (
        "context"

        myauth "encore.app/auth"
        "encore.dev/beta/auth"
    )

    //encore:api auth method=GET path=/profile
    func GetProfile(ctx context.Context) (*myauth.AuthData, error) {
        data := auth.Data().(*myauth.AuthData)
        return data, nil
    }

The auth handler is defined once and applies to every endpoint marked with auth. The AuthData struct is available in any service that needs it, and it's type-safe since you're working with a concrete Go struct instead of pulling untyped values from a context map.
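The difference between typed auth data and an untyped context map can be shown without either framework. The sketch below uses the standard context package with an unexported key type and typed accessors; `withAuth`, `authFrom`, `userIDOf`, and `demo` are hypothetical names for this illustration and are not part of Echo or Encore.

```go
package main

import (
	"context"
	"fmt"
)

// AuthData is the concrete type every consumer works with.
type AuthData struct {
	UserID string
}

// authKey is unexported, so only this package can set or read the value.
type authKey struct{}

// withAuth returns a context that carries the caller's auth data.
func withAuth(ctx context.Context, d *AuthData) context.Context {
	return context.WithValue(ctx, authKey{}, d)
}

// authFrom is the single place where the type assertion happens.
func authFrom(ctx context.Context) (*AuthData, bool) {
	d, ok := ctx.Value(authKey{}).(*AuthData)
	return d, ok
}

// userIDOf returns the authenticated user ID, or "" for anonymous callers.
func userIDOf(ctx context.Context) string {
	if d, ok := authFrom(ctx); ok {
		return d.UserID
	}
	return ""
}

// demo looks up the user ID in an authenticated and an anonymous context.
func demo() (authed, anonymous string) {
	base := context.Background()
	return userIDOf(withAuth(base, &AuthData{UserID: "user-123"})), userIDOf(base)
}

func main() {
	authed, anonymous := demo()
	fmt.Printf("authed=%q anonymous=%q\n", authed, anonymous)
}
```

Callers never see `interface{}` or a string key, so a typo in a key name or a wrong assertion type becomes a compile error instead of a runtime panic.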

Verdict: Echo's middleware system is flexible and well-designed. You can compose middleware freely, apply it to route groups, and build custom chains for different paths. Encore's auth handler is more structured, with a single auth definition that provides type-safe auth data across all services. Echo gives you more flexibility in how authentication is applied. Encore provides stronger guarantees that auth data is consistent and typed across your entire application. For single-service applications, Echo's approach is perfectly adequate. For multi-service architectures, Encore's centralized, typed auth handler avoids the common problem of reimplementing auth middleware in each service.

Observability

Echo

Echo provides request logging through middleware, and you can integrate OpenTelemetry for distributed tracing:

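    package main

    import (
        "context"
        "log"
        "net/http"

        "github.com/labstack/echo/v4"
        "github.com/labstack/echo/v4/middleware"
        "go.opentelemetry.io/contrib/instrumentation/github.com/labstack/echo/otelecho"
        "go.opentelemetry.io/otel"
        "go.opentelemetry.io/otel/exporters/otlp/otlptrace/otlptracegrpc"
        sdktrace "go.opentelemetry.io/otel/sdk/trace"
    )

    func main() {
        ctx := context.Background()

        // Set up an OTLP exporter over gRPC; endpoint options live in otlptracegrpc.
        exporter, err := otlptracegrpc.New(ctx,
            otlptracegrpc.WithEndpoint("otel-collector:4317"),
        )
        if err != nil {
            log.Fatal(err)
        }

        tp := sdktrace.NewTracerProvider(sdktrace.WithBatcher(exporter))
        defer tp.Shutdown(ctx) // flush buffered spans on exit
        otel.SetTracerProvider(tp)

        e := echo.New()
        e.Use(middleware.Logger())
        e.Use(otelecho.Middleware("my-service"))

        e.GET("/users/:id", getUser)

        if err := e.Start(":8080"); err != nil {
            log.Println(err)
        }
    }

    // getUser is a placeholder handler so the example compiles.
    func getUser(c echo.Context) error {
        return c.JSON(http.StatusOK, map[string]string{"id": c.Param("id")})
    }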

The otelecho middleware instruments incoming requests, but you still need to configure an exporter, set up a collector (like Jaeger or Grafana Tempo), and manually instrument database calls, outgoing HTTP requests, and any other operations you want to trace. It's a real project to get comprehensive observability working, even with the OpenTelemetry ecosystem.

Encore.go

    package user

    import (
        "context"

        "encore.dev/storage/sqldb"
    )

    var db = sqldb.NewDatabase("users", sqldb.DatabaseConfig{
        Migrations: "./migrations",
    })

    // User is the struct defined in the Database Integration example.

    //encore:api public method=GET path=/users/:id
    func GetUser(ctx context.Context, id int) (*User, error) {
        var user User
        err := db.QueryRow(ctx,
            "SELECT id, name, email FROM users WHERE id = $1", id,
        ).Scan(&user.ID, &user.Name, &user.Email)
        if err != nil {
            return nil, err
        }
        return &user, nil
    }

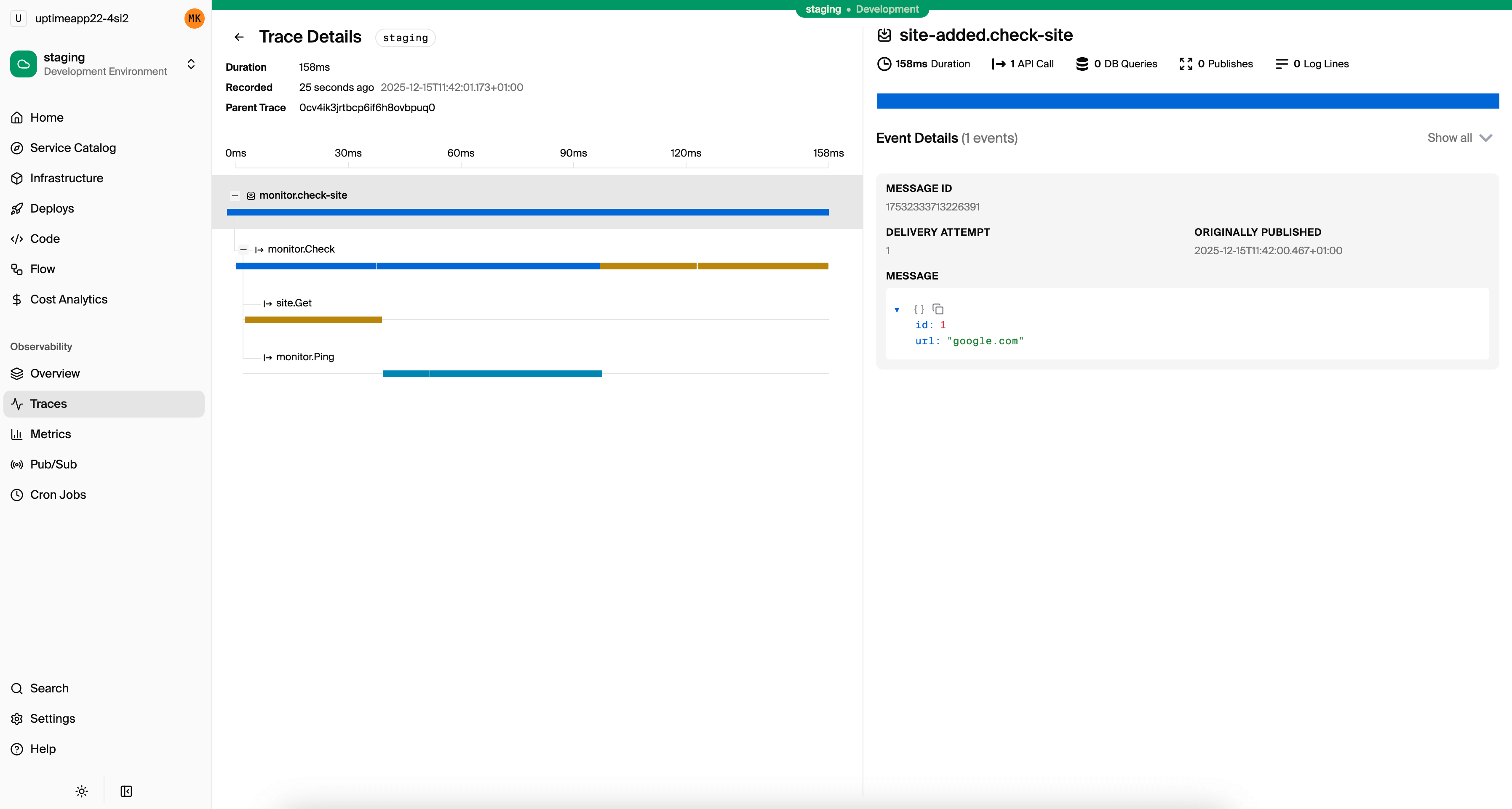

There's nothing special in the code. Encore traces every request end-to-end automatically. Database queries, service-to-service calls, and Pub/Sub messages all appear in traces without any instrumentation code. The local development dashboard shows traces immediately when you run encore run.

Verdict: Echo can achieve good observability, but it requires meaningful effort: choosing and configuring an OpenTelemetry exporter, running a collector, instrumenting database drivers, and wiring it all together. Encore provides distributed tracing, structured logging, and metrics out of the box with zero configuration. If observability is important to your project and you'd rather not spend days setting it up, Encore has a significant advantage.

Microservices and Service Communication

Echo

With Echo, each service is a standalone application with its own main() function and its own HTTP server:

    // users-service/main.go
    package main

    import (
        "net/http"

        "github.com/labstack/echo/v4"
    )

    func main() {
        e := echo.New()

        e.GET("/users/:id", func(c echo.Context) error {
            id := c.Param("id")
            return c.JSON(http.StatusOK, map[string]string{
                "id": id, "name": "Alice",
            })
        })

        e.Logger.Fatal(e.Start(":3001"))
    }

    // orders-service/main.go
    package main

    import (
        "encoding/json"
        "fmt"
        "net/http"
        "os"

        "github.com/labstack/echo/v4"
    )

    func main() {
        e := echo.New()

        e.POST("/orders", func(c echo.Context) error {
            var req struct {
                UserID string `json:"userId"`
            }
            if err := c.Bind(&req); err != nil {
                return err
            }

            // Manual HTTP call to users service
            usersURL := os.Getenv("USERS_SERVICE_URL")
            resp, err := http.Get(fmt.Sprintf("%s/users/%s", usersURL, req.UserID))
            if err != nil {
                return echo.NewHTTPError(http.StatusServiceUnavailable, "users service unavailable")
            }
            defer resp.Body.Close()

            // A non-200 reply or an undecodable body must not be treated as a user.
            if resp.StatusCode != http.StatusOK {
                return echo.NewHTTPError(http.StatusBadGateway, "users service returned "+resp.Status)
            }
            var user map[string]string
            if err := json.NewDecoder(resp.Body).Decode(&user); err != nil {
                return echo.NewHTTPError(http.StatusBadGateway, "invalid response from users service")
            }

            return c.JSON(http.StatusCreated, map[string]interface{}{
                "orderId": 1,
                "user":    user,
            })
        })

        e.Logger.Fatal(e.Start(":3002"))
    }

You manage service URLs through environment variables, handle HTTP errors and retries manually, and parse responses without type safety. As the number of services grows, the boilerplate for inter-service communication adds up. You also need to run each service separately during local development and configure service discovery for production.
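To give a sense of how much plumbing "handle HTTP errors and retries manually" implies, here is a rough sketch of a typed client for the users service using only the standard library. `getUser`, `fetchOnce`, `handlerTransport`, `demo`, and the `http://users.internal` base URL are all hypothetical; the in-memory transport exists only so the example runs without a network, and a production client would also add backoff between attempts.

```go
package main

import (
	"context"
	"encoding/json"
	"fmt"
	"net/http"
	"net/http/httptest"
)

type User struct {
	ID   string `json:"id"`
	Name string `json:"name"`
}

// getUser retries transport errors and 5xx replies up to attempts times
// and decodes a successful reply into a typed struct.
func getUser(ctx context.Context, client *http.Client, baseURL, id string, attempts int) (*User, error) {
	if attempts < 1 {
		attempts = 1
	}
	var lastErr error
	for i := 0; i < attempts; i++ {
		u, retry, err := fetchOnce(ctx, client, baseURL+"/users/"+id)
		if err == nil {
			return u, nil
		}
		if !retry {
			return nil, err
		}
		lastErr = err
	}
	return nil, lastErr
}

// fetchOnce performs one request and reports whether a failure is retryable.
func fetchOnce(ctx context.Context, client *http.Client, url string) (*User, bool, error) {
	req, err := http.NewRequestWithContext(ctx, http.MethodGet, url, nil)
	if err != nil {
		return nil, false, err
	}
	resp, err := client.Do(req)
	if err != nil {
		return nil, true, err
	}
	defer resp.Body.Close()
	if resp.StatusCode != http.StatusOK {
		return nil, resp.StatusCode >= 500, fmt.Errorf("users service: %s", resp.Status)
	}
	var u User
	if err := json.NewDecoder(resp.Body).Decode(&u); err != nil {
		return nil, false, err
	}
	return &u, false, nil
}

// handlerTransport answers requests from an in-memory handler.
type handlerTransport struct{ h http.Handler }

func (t handlerTransport) RoundTrip(r *http.Request) (*http.Response, error) {
	rec := httptest.NewRecorder()
	t.h.ServeHTTP(rec, r)
	return rec.Result(), nil
}

// demo simulates a users service that fails once, then succeeds.
func demo() (*User, error) {
	calls := 0
	h := http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		calls++
		if calls == 1 {
			http.Error(w, "warming up", http.StatusServiceUnavailable)
			return
		}
		json.NewEncoder(w).Encode(User{ID: "42", Name: "Alice"})
	})
	client := &http.Client{Transport: handlerTransport{h: h}}
	return getUser(context.Background(), client, "http://users.internal", "42", 3)
}

func main() {
	u, err := demo()
	fmt.Println(u, err)
}
```

Even this small version has to decide which failures are retryable and how to surface the rest, and the `User` struct must be kept in sync with the users service by hand.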

Encore.go

    // users/users.go
    package users

    import "context"

    type User struct {
        ID   string `json:"id"`
        Name string `json:"name"`
    }

    //encore:api public method=GET path=/users/:id
    func Get(ctx context.Context, id string) (*User, error) {
        return &User{ID: id, Name: "Alice"}, nil
    }

    // orders/orders.go
    package orders

    import (
        "context"

        "encore.app/users"
    )

    type CreateOrderRequest struct {
        UserID string `json:"userId"`
    }

    type Order struct {
        OrderID int         `json:"orderId"`
        User    *users.User `json:"user"`
    }

    //encore:api public method=POST path=/orders
    func CreateOrder(ctx context.Context, req *CreateOrderRequest) (*Order, error) {
        user, err := users.Get(ctx, req.UserID)
        if err != nil {
            return nil, err
        }
        return &Order{OrderID: 1, User: user}, nil
    }

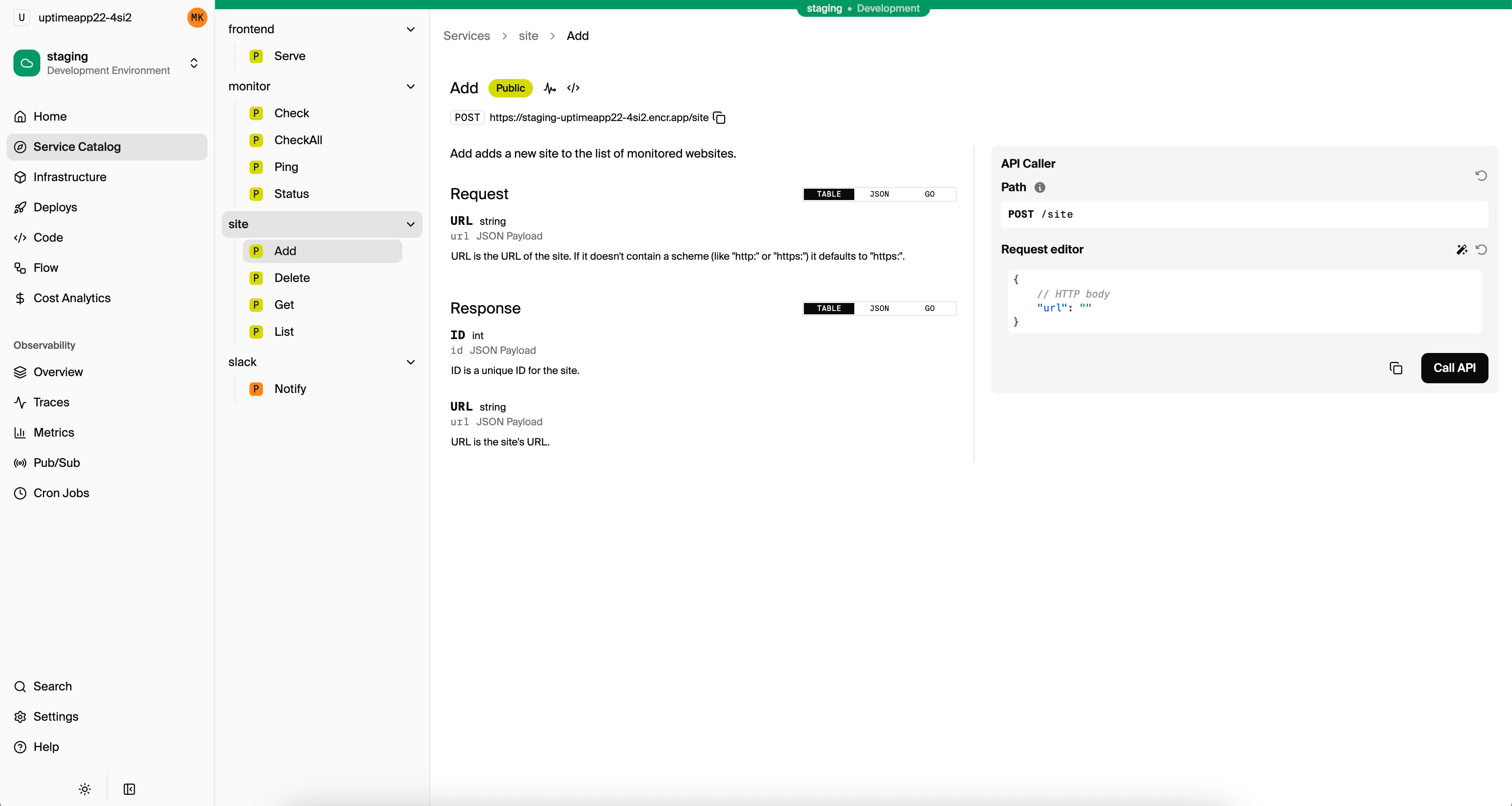

Calling another service is a regular Go function call. The types are shared at compile time, so if the users service changes its response type, the orders service gets a compile error. Encore handles service discovery, request serialization, and tracing correlation automatically. Running encore run starts all services together, and you can see how they communicate in the service catalog.

Verdict: This is where the difference between a web framework and a backend platform becomes most apparent. Echo gives you HTTP routing and leaves service communication entirely to you, including URLs, serialization, error handling, retries, and service discovery. Encore makes service calls feel like local function calls with compile-time type checking. For single-service applications, this distinction doesn't matter. For multi-service architectures, Encore removes a substantial amount of infrastructure plumbing.

AI Code Generation

Go has strong opinions about many things, but how you structure a backend isn't one of them. That's usually fine for developers, but AI agents are the inverse: good at filling in details once a structure exists, bad at deciding what that structure should be.

Echo

When an AI agent writes code for an Echo project, it has to make architectural decisions on every prompt: how to set up the custom validator, whether to use c.Bind() then c.Validate() or combine them, which error handling pattern to follow, how to structure middleware chains, where to register routes. The output works, but it varies between prompts, and the agent spends most of its effort on plumbing rather than your actual business logic.

    // An agent asked to "add an order creation endpoint" might generate:
    // - A new route registration or modify an existing group
    // - A fresh validator setup or a different validation approach each time
    // - Error responses using echo.NewHTTPError, c.JSON, or a custom error handler
    // - Manual request binding with a different pattern than the rest of the codebase
    // - Middleware wiring that doesn't match existing conventions

Encore.go

With Encore, the project's API patterns, infrastructure declarations, and service structure are already defined. The agent reads the existing conventions and writes code that follows them. A prompt to "add an order creation endpoint" produces a function with an //encore:api annotation and a database call using the existing sqldb declaration, not a new architecture.

    // The agent sees existing patterns and follows them:

    //encore:api auth method=POST path=/orders
    func Create(ctx context.Context, req *CreateOrderRequest) (*Order, error) {
        // Business logic only: the agent doesn't reinvent the structure
        var order Order
        err := db.QueryRow(ctx,
            "INSERT INTO orders (customer_id, total) VALUES ($1, $2) RETURNING id, customer_id, total",
            req.CustomerID, req.Total,
        ).Scan(&order.ID, &order.CustomerID, &order.Total)
        if err != nil {
            return nil, err
        }
        return &order, nil
    }

Encore also provides an MCP server and editor rules (encore llm-rules init) that give agents access to database schemas, distributed traces, and service architecture. This means agents can generate queries that match your actual tables, debug with real request data, and verify their own work. Read more in How AI Agents Want to Write Go.

Verdict: Echo requires the agent to make architectural decisions on every prompt, which produces working but inconsistent output. Encore gives agents conventions to follow and live system context through MCP, which leads to more consistent, deployable code.

When to Choose Echo

Echo is a solid choice when:

- You're building a single-service API and don't need infrastructure automation. Echo's clean API and good documentation make it productive for focused projects.

- You want a familiar, idiomatic Go experience. Echo's use of context.Context and error returns feels natural to Go developers. It doesn't impose unusual patterns.

- You have existing infrastructure. If your team already has database provisioning, deployment pipelines, and observability tooling in place, Echo slots in without asking you to change anything.

- You need maximum flexibility in middleware composition. Echo's middleware system is well-designed and lets you build custom authentication, logging, and request processing chains.

- You prefer explicit control over every layer. Some teams want to see and manage every HTTP detail. Echo provides that transparency.

When to Choose Encore.go

Encore.go makes sense when:

- You're building a distributed system with multiple services. Type-safe service calls, automatic service discovery, and shared types across services eliminate a category of bugs and boilerplate.

- Local infrastructure automation matters. If you don't want to manage Docker containers, connection strings, and environment variables just to run your application locally, Encore handles all of that.

- You want built-in observability from day one. Distributed tracing across services, database queries, and Pub/Sub messages works without configuring OpenTelemetry or running a collector.

- You need databases, Pub/Sub, or cron jobs. Encore's infrastructure primitives let you declare these in code and have them provisioned automatically in every environment.

- You want to deploy to your own AWS or GCP account with infrastructure provisioned automatically. Encore Cloud takes your code and creates real cloud resources in your account.

- You're using AI coding agents and want them to generate backend code that includes infrastructure. Encore's declarative infrastructure model gives AI agents enough context to produce deployable code, not just HTTP handlers.

Getting Started

Try both with a small project to see which tradeoffs matter for your situation:

- Echo Guide

- Encore.go Quickstart

- Encore.go REST API Tutorial to build a complete application with a database

You might also find the Best Go Backend Frameworks comparison helpful for a broader perspective on the Go ecosystem.

Deploy a working example to see how Encore compares in practice:

Want to jump straight to a running app? Clone this starter and deploy it to your own cloud.

Have questions about choosing a framework? Join our Discord community where developers discuss architecture decisions daily.